Mar 01

Subject: AI Governance, Engineering Accountability, and H2E-Holonomic Integration

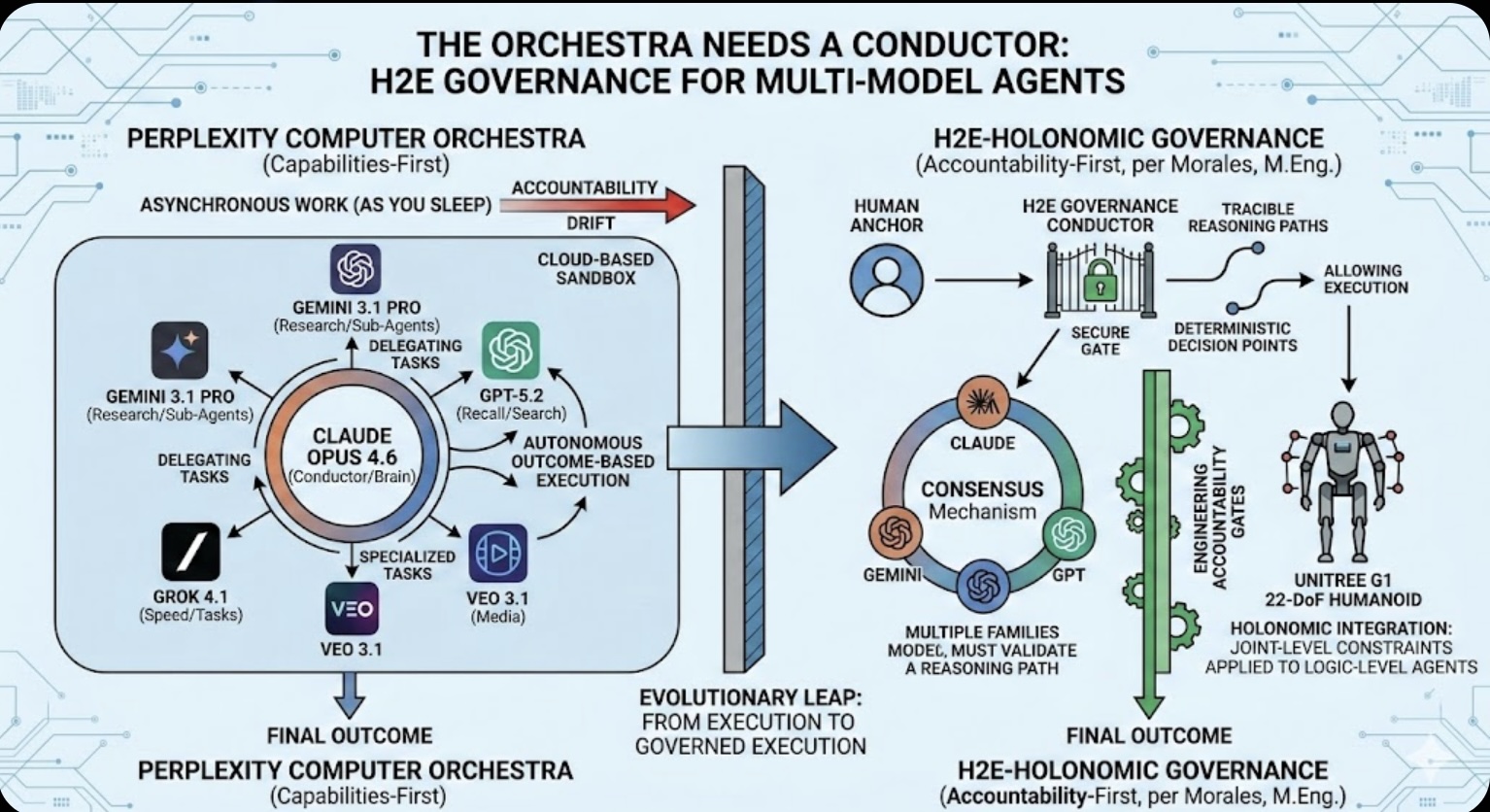

The recent launch of Perplexity Computer (February 25, 2026) represents a paradigm shift from retrieval-augmented generation (RAG) to autonomous agentic orchestration. By unifying 19 specialized models into a single execution engine, Perplexity has solved the "utility" problem. However, this shift from "search engine" to "execution engine" introduces a critical Governance Gap. This paper evaluates the Perplexity "Orchestra" through the lens of the H2E (Human-to-Expert) framework, arguing that without explicit engineering accountability, "digital employees" risk operational drift.

This past week marked a major shift in the AI landscape. Perplexity is no longer just a way to find information; with the release of Perplexity Computer, the company is building a "digital employee." The move is a clear bid to compete with open-source agents like OpenClaw, but within the protected environment of a cloud-based sandbox.

While the web has traditionally functioned as a "READ" environment, CEO Aravind Srinivas has positioned this new system to "read and execute" across the entire digital stack. However, as we move from chatbots to autonomous "Computers," the need for a robust governance framework has never been more urgent.

Perplexity's strategy over the last two months has focused on three pillars of capability:

While technically impressive, the Perplexity "Orchestra" model reveals several friction points when measured against H2E-Holonomic Integration.

Perplexity Computer is a powerful execution engine, but it is incomplete. For AI to be truly "Resilient," it requires a conductor that is not just another model but a Governance Protocol. As we build "digital employees," we must engineer accountability from the ground up.

Keywords: Generative AI, Agentic AI, AI Governance
