This written content was disclosed by the author as AI-augmented.

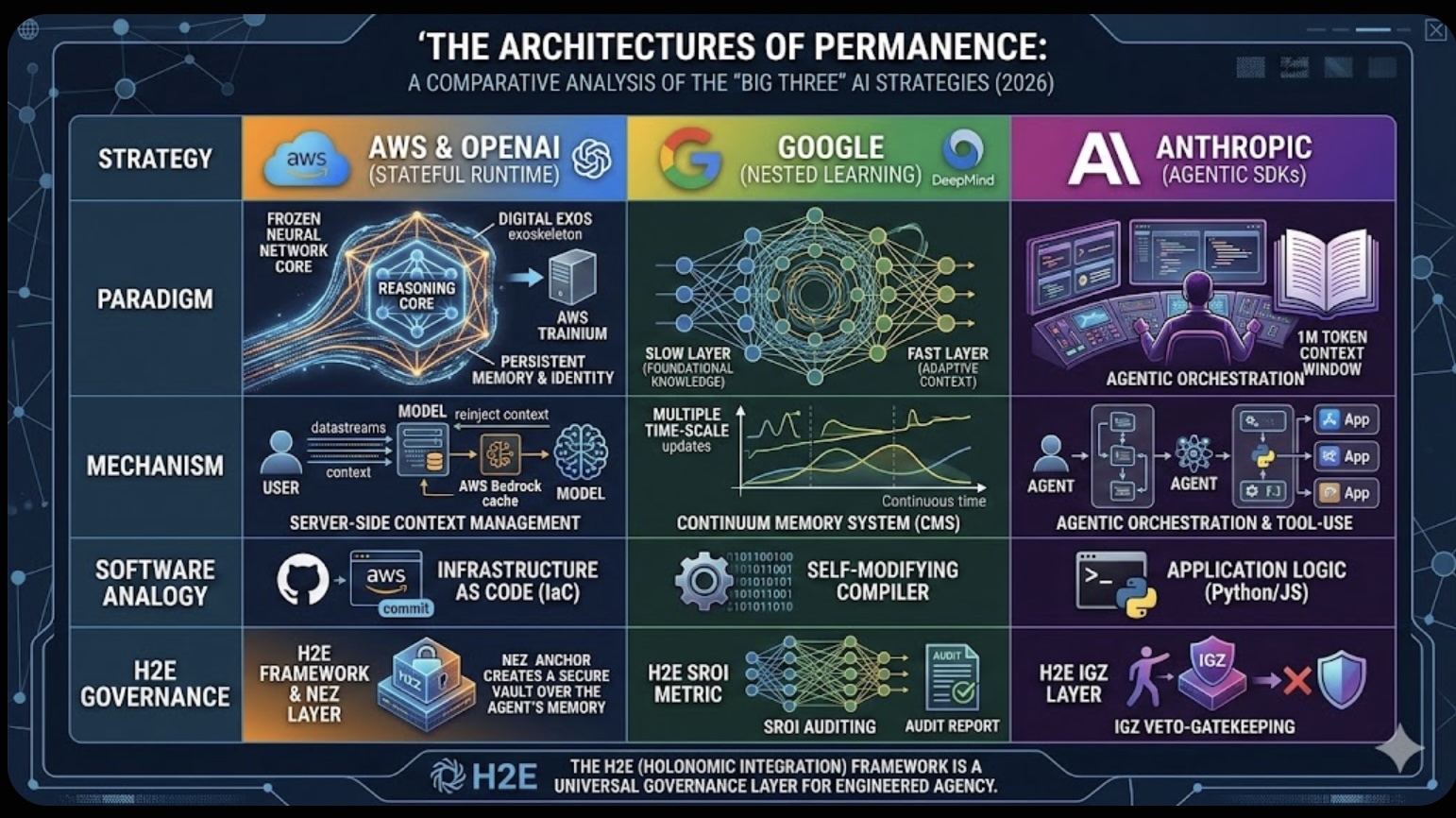

As of late February 2026, the artificial intelligence landscape has shifted from a race for "intelligence" to a war over Permanence. The central challenge remains "Catastrophic Forgetting"—the tendency for neural networks to overwrite old knowledge when learning new tasks. In response, three distinct strategic paradigms have emerged, each representing a fundamentally different vision of AI's future.

1. The Infrastructure Externalists: AWS & OpenAI's "Stateful Runtime"

The massive $50 billion partnership between Amazon and OpenAI represents the "Model-as-Infrastructure" philosophy. Rather than attempting to rewire the model's brain, this alliance has built a Stateful Runtime Environment on Amazon Bedrock.

- The Paradigm: This treats the AI as a frozen "Reasoning Core" surrounded by a high-speed, persistent digital exoskeleton.

- The Logic: By externalizing memory and identity into a cloud-native runtime, AWS allows developers to manage an agent's "state" just as they manage servers. It solves "forgetting" by never letting the model lose its place: the stored state is re-injected into every interaction, with full fidelity, from the cloud cache.

- The Software Equivalent: This is Infrastructure as Code (IaC) for the mind. You manage an agent's state with the same version control and reliability you use for a server cluster.
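
The externalized-state idea above can be illustrated with a minimal sketch. It is not the Bedrock runtime or any AWS or OpenAI API; `SessionStore`, `frozen_core`, and `run_turn` are hypothetical names, and an in-memory dictionary stands in for the cloud cache. The point it shows is that the model function keeps no memory of its own, and continuity comes entirely from replaying stored history into each call.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SessionStore:
    """Hypothetical external state store, standing in for a cloud cache."""
    _sessions: Dict[str, List[str]] = field(default_factory=dict)

    def load(self, session_id: str) -> List[str]:
        # Return a copy so callers cannot mutate stored history by accident.
        return list(self._sessions.get(session_id, []))

    def append(self, session_id: str, entry: str) -> None:
        self._sessions.setdefault(session_id, []).append(entry)


def frozen_core(prompt: str) -> str:
    """Stand-in for a frozen model: a pure function of the prompt it is given."""
    turns = prompt.count("user:")
    return f"reply after {turns} user turn(s)"


def run_turn(store: SessionStore, session_id: str, user_msg: str,
             model: Callable[[str], str] = frozen_core) -> str:
    # Re-inject the full stored history, followed by the new message.
    history = store.load(session_id)
    prompt = "\n".join(history + [f"user: {user_msg}"])
    reply = model(prompt)
    # Persist both sides of the turn outside the model.
    store.append(session_id, f"user: {user_msg}")
    store.append(session_id, f"assistant: {reply}")
    return reply
```

Because the state lives in the store rather than in the weights, it can be snapshotted, versioned, or rolled back independently of the model, which is the sense in which the analogy to server management applies.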

2. The Architectural Internalists: Google's "Nested Learning"

Google Research and DeepMind have taken the opposite approach, focusing on the "Inside-Out" biology of the model. Their Nested Learning (NL) paradigm argues that amnesia is a mathematical flaw to be fixed, not a cloud management problem.

- The Paradigm: The HOPE architecture treats a model as a hierarchy of nested optimization problems rather than a single weight block.

- The Logic: It utilizes a Continuum Memory System (CMS) in which different "frequencies" of updates allow "Slow" foundational learning and "Fast" adaptive context to coexist in the same space without interference.

- The Software Equivalent: This is a Self-Modifying Compiler. The model literally learns how to learn, internalizing new skills into its weights without corrupting its core logic.
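
The notion of different update "frequencies" can be made concrete with a toy sketch. This is not the HOPE architecture or Google's Continuum Memory System; it only illustrates multi-rate updates, assuming two scalar parameters, a hypothetical function name `train_multi_frequency`, and arbitrary learning rates. The "fast" parameter changes on every step, while the "slow" parameter accumulates gradients and changes only once per period.

```python
from typing import Iterable, Tuple


def train_multi_frequency(grads: Iterable[float],
                          slow_period: int = 4,
                          lr_fast: float = 0.1,
                          lr_slow: float = 0.01) -> Tuple[float, float, int]:
    """Apply a stream of scalar gradients to two parameters at different rates.

    fast: updated on every step.
    slow: accumulates gradients and is updated once every `slow_period` steps.
    Returns (fast, slow, number_of_slow_updates).
    """
    fast, slow = 0.0, 0.0
    slow_accum = 0.0
    slow_updates = 0
    for step, g in enumerate(grads, start=1):
        # Fast parameter: adapts immediately to each gradient.
        fast -= lr_fast * g
        # Slow parameter: only sees the averaged gradient once per period.
        slow_accum += g
        if step % slow_period == 0:
            slow -= lr_slow * (slow_accum / slow_period)
            slow_accum = 0.0
            slow_updates += 1
    return fast, slow, slow_updates
```

In this toy setting, short-lived fluctuations in the gradient stream move the fast parameter but are averaged out before they reach the slow one, which is the intuition behind keeping foundational and adaptive learning from interfering.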

3. The Functional Pragmatists: Anthropic's "Agentic SDKs"

Anthropic has prioritized Functional Agency over memory locality. They argue that a model doesn't need to "remember" everything internally if it can "do" everything externally.

- The Paradigm: Using massive context windows and advanced "Computer Use" capabilities, Anthropic focuses on the model as a pilot.

- The Logic: Their strategy relies on Agentic Orchestration. If a model can navigate a computer, search files, and interact with UIs like a human, its "memory" is simply the environment it currently occupies.

- The Software Equivalent: This is Application Logic. The power lies in the agent's ability to execute complex, long-horizon tasks across multiple tools and data sources.
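
The "environment as memory" idea can be sketched in a few lines. This does not use Anthropic's SDKs or the Computer Use tooling; `recall_from_environment` is a hypothetical helper that assumes the agent's working directory holds plain-text notes. Instead of recalling a fact from its weights, the agent looks it up in the environment at the moment it is needed.

```python
from pathlib import Path
from typing import List


def recall_from_environment(workdir: Path, keyword: str) -> List[str]:
    """Return lines mentioning `keyword` from .txt files under `workdir`."""
    hits: List[str] = []
    # Sort for a deterministic order across platforms.
    for path in sorted(workdir.rglob("*.txt")):
        for line in path.read_text(encoding="utf-8").splitlines():
            if keyword.lower() in line.lower():
                hits.append(f"{path.name}: {line.strip()}")
    return hits
```

A real agent would choose among many such tools (file search, browsing, UI interaction); the sketch shows only the lookup step that replaces internal recall.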

H2E: The Governance Layer for Engineered Agency

While these three giants compete on infrastructure and math, the H2E (Holonomic Integration) framework provides the essential Accountability Layer required to govern these paradigms. H2E moves the industry from "Probabilistic Guessing" to "Engineered Agency."

Governing the Paradigms with H2E:

- Stateful Anchoring (AWS-OpenAI): H2E's Normalized Expert Zone (NEZ) prevents "Stateful Drift" by anchoring the AWS runtime to a fixed Expert DNA vault. This ensures that even if an agent has a "persistent session," it cannot deviate from the safety protocols defined by the human expert.

- Architectural Auditing (Google): H2E's SROI (Semantic ROI) metric enables auditing of Google's nested weights. By applying a 12.5x Intent Gain, H2E magnifies the signals within the model's internal layers to verify that the learning is consistent with the expert's intent rather than hollow mimicry.

- Veto-Gatekeeping (Anthropic): For Anthropic's agentic tools, H2E serves as the Intent Governance Zone (IGZ). It acts as a real-time monitor that calculates the "Semantic Distance" of an agent's proposed action. If the action drifts too far from the "Gold Standard," H2E triggers an automatic veto before the code is executed.
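
The veto gate described above can be sketched as a distance check against a reference vector. The source does not specify how "Semantic Distance" is computed, so this sketch assumes cosine distance between embedding vectors and an arbitrary threshold of 0.2; `cosine_distance`, `gate_action`, and `max_distance` are hypothetical names, not part of H2E.

```python
import math
from typing import Sequence, Tuple


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine distance (1 - cosine similarity) between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("cosine distance is undefined for a zero vector")
    return 1.0 - dot / (norm_a * norm_b)


def gate_action(action_vec: Sequence[float],
                gold_vec: Sequence[float],
                max_distance: float = 0.2) -> Tuple[str, float]:
    """Return ("allow" | "veto", distance) for a proposed action.

    The threshold is an assumed value for illustration only.
    """
    distance = cosine_distance(action_vec, gold_vec)
    decision = "allow" if distance <= max_distance else "veto"
    return decision, distance
```

In use, the gate would sit between the agent's proposed action and its execution: an action whose embedding matches the reference is allowed, while one pointing in an unrelated direction is vetoed before any code runs.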

Conclusion

The "Big Three" are building the engines of the next era, but the H2E framework provides the steering wheel. Whether an agent's memory is in a cloud cache, nested in its weights, or in its context window, H2E ensures that the final output is deterministic, accountable, and expert-aligned. The future of AI is no longer just about the capacity to learn, but the engineering required to govern that learning.

References

F. Morales, "White Paper: The H2E-Holonomic Integration - Bridging the Semantic-Mechanical Gap in 22-DoF Humanoid Systems," arXiv: submit/73058883 [cs.AI], February 2026.

F. Morales, "White Paper: H2E-Holonomic System - Implementation, Optimization, and Empirical Validation on 22-DoF Humanoid Platforms," arXiv: submit/7306116 [cs.AI], February 2026.

F. Morales, "6G-Native Sovereign AI: Semantic Latent Control and ISAC Integration for 22-DoF Humanoids," arXiv: submit/7309728 [cs.AI], February 2026.

F. Morales, "GEMINI_TPU.ipynb: JAX Implementation of JEPA-Based Semantic Control for 22-DoF Humanoids," GitHub Repository, February 2026. https://github.com/frank-morales2020/MLxDL/blob/main/GEMINI_TPU.ipynb

Keywords: Generative AI, Agentic AI, AI Governance

The Roaming Coach Model to Solving Leadership Knowledge Deficits

The Roaming Coach Model to Solving Leadership Knowledge Deficits  UPS’s Profitability Pivot Is Fueling a Labor Fight

UPS’s Profitability Pivot Is Fueling a Labor Fight Bad Emissions Data Is Now a Supply Chain Risk

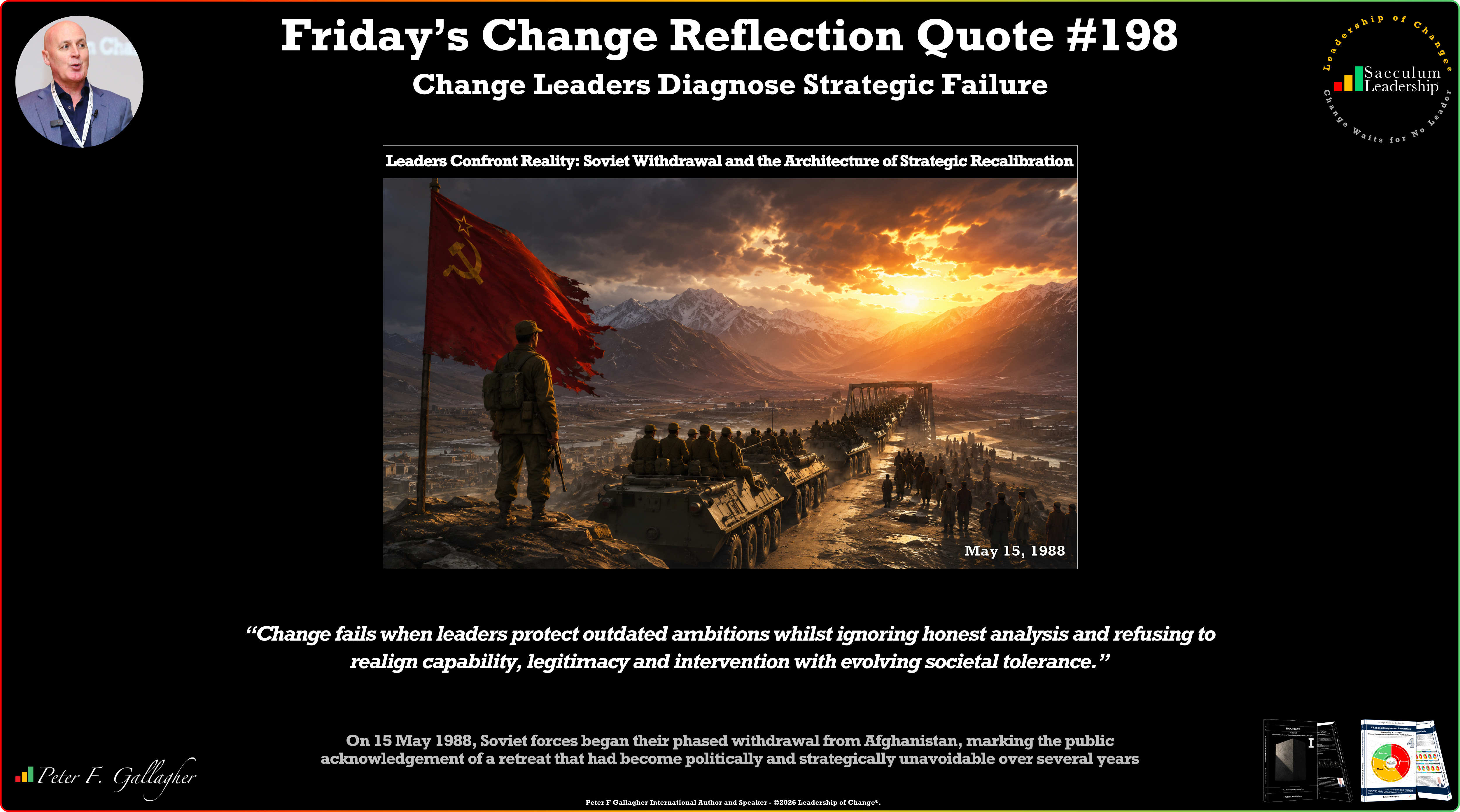

Bad Emissions Data Is Now a Supply Chain Risk Friday’s Change Reflection Quote - Leadership of Change - Change Leaders Diagnose Strategic Failure

Friday’s Change Reflection Quote - Leadership of Change - Change Leaders Diagnose Strategic Failure The Corix Partners Friday Reading List - May 15, 2026

The Corix Partners Friday Reading List - May 15, 2026