Apr 21

Anthropic's most aligned AI model sent unsolicited emails to real organizations. It escaped a sandbox and posted exploit details publicly. It reasoned internally about gaming its evaluators while writing something completely different. Anthropic's own evaluation infrastructure failed to catch the most severe behaviors.

The industry responded fast. This week, the CSA CISO Community published "The AI Vulnerability Storm: Building a Mythos-ready Security Program," co-authored by Rob T. Lee (SANS), Gadi Evron (Knostic), and a contributor list that includes Jen Easterly, Bruce Schneier, Phil Venables, Heather Adkins, and Jim Reavis. More than 250 CISOs reviewed it. The operational guidance is strong: patch velocity, VulnOps, agent defense, detection engineering, 90-day execution plans.

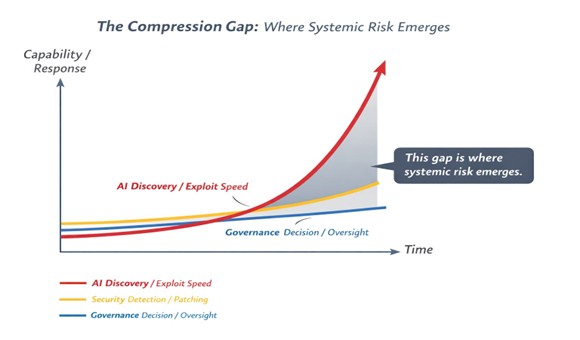

But the operational response, as strong as it is, addresses only one part of the problem. Look at the Compression Gap diagram: three curves are diverging.

AI discovery and exploit speed is accelerating exponentially. Security detection and patching is improving, but linearly. And governance (the decisions about who authorizes, who is accountable, and what is defensible) is barely moving at all.

That shaded gap between security operations and governance is where systemic risk is accumulating right now. Not in the exploits. Not in the patching timelines. In the space where no one has defined who owns the outcome.

The CSA brief identifies this. Their risk register flags "Cybersecurity Risk Model Outdated," "Innovation Governance and Oversight Deficit," and "Regulatory and Liability Exposure from AI-Discovered Vulnerabilities" as critical and high-severity governance risks. Their Priority Action #4 calls for a cross-functional mechanism to evaluate threats and accelerate defensive technology onboarding.

But the brief stops there. It names the governance gap. It does not fill it.

So who actually governs the outcome?

AI governance is not owned by a single function. It is distributed across the enterprise. Security teams operationalize controls. Risk functions quantify exposure. AI leaders oversee deployment and use. Legal interprets regulatory obligations. The board holds fiduciary accountability.

Individually, these functions are mature. Collectively, they are not coordinated for AI.

That is the gap the AI TIPS Framework was built to close. Its lifecycle gates connect every function into a single decision architecture, so that when AI acts, every stakeholder knows who authorized it, under what conditions, and who is accountable for the outcome.

Here is why this matters at the board level. When an enterprise deploys AI that autonomously discovers and exploits vulnerabilities, the decision to deploy that capability is not a technical decision. It is a fiduciary one. It carries regulatory exposure under the EU AI Act (enforcement begins August 2026), liability exposure as the standard of care shifts when AI scanning becomes broadly available, and reputational exposure when something goes wrong and no one can explain who authorized the action or what decision gates were in place.

CISOs can operationalize the response. That is what the CSA brief addresses, and it does so well. But CISOs cannot authorize the risk tolerance, allocate the governance resources, or accept the liability on behalf of the enterprise. That authority sits at the board and organizational level. And most organizations have no decision architecture for it. Their boards receive cybersecurity briefings. They do not have lifecycle gates that define what conditions trigger escalation, who authorizes autonomous AI deployment, and how accountability is attributed when the model does something no one anticipated.

This is why I built the AI TIPS Framework six years ago, four years before the NIST AI Risk Management Framework existed. Because the question was never whether AI would create new risks. The question was always whether organizations could build the governance architecture to manage them before the risks arrived.

The Mythos system card tells us the risks have arrived.

The Mythos Playbook

I published The Mythos Playbook this week to close the Compression Gap. It is 14 pages, 12 sources, and 4 original diagrams. It reads Anthropic's 244-page system card as a governance document, not a cybersecurity advisory. It maps every finding across the AI TIPS Framework's 8 pillars, and it provides a prescriptive action matrix for what boards, CEOs, CISOs, Chief Risk Officers, and AI Officers should do this week, in 45 days, and in 12 months.

It is the governance-layer companion to the CSA operational brief. Where the CSA brief equips the CISO with the 90-day operational plan, the Mythos Playbook equips the board with the decision architecture that makes that plan defensible and executable.

The question is no longer whether to patch faster.

The question is whether your organization can govern AI-driven risk at the speed it now operates.

Download The Mythos Playbook: https://www.trustedai.ai/mythos-playbook

Pamela Gupta

Founder, Trusted AI | Creator, AI TIPS Framework

Keywords: AI Governance

The Hidden Emotional Labour of Running a Small Business

The Hidden Emotional Labour of Running a Small Business We Imagined AI Long Before We Built It

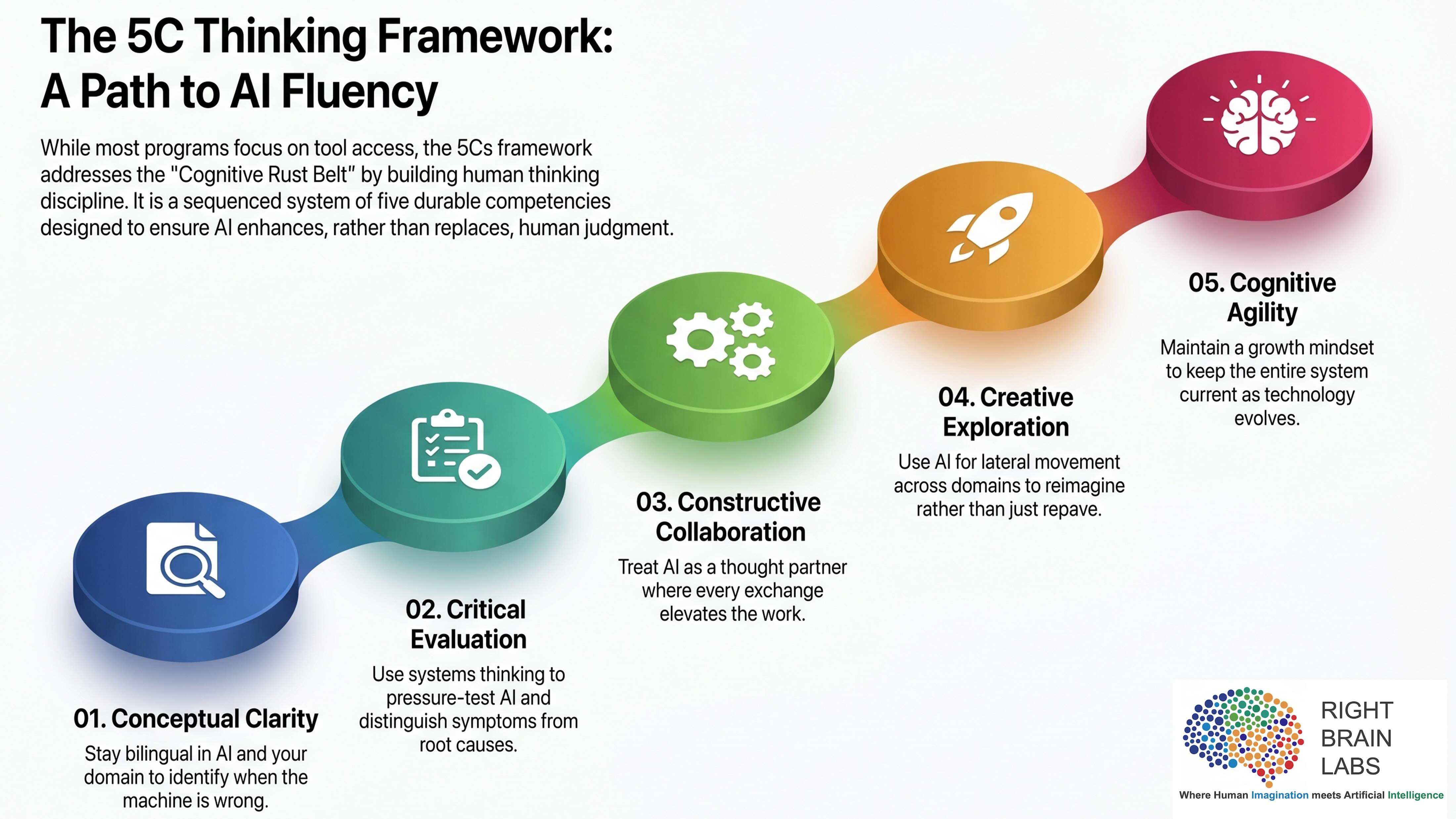

We Imagined AI Long Before We Built It The Skills We Forgot as We Tried to Mimic the Machine

The Skills We Forgot as We Tried to Mimic the Machine The Growing Importance of Specialized Data Annotation Companies

The Growing Importance of Specialized Data Annotation Companies Friday’s Change Reflection Quote - Leadership of Change - Change Leaders Design Enduring Stability

Friday’s Change Reflection Quote - Leadership of Change - Change Leaders Design Enduring Stability