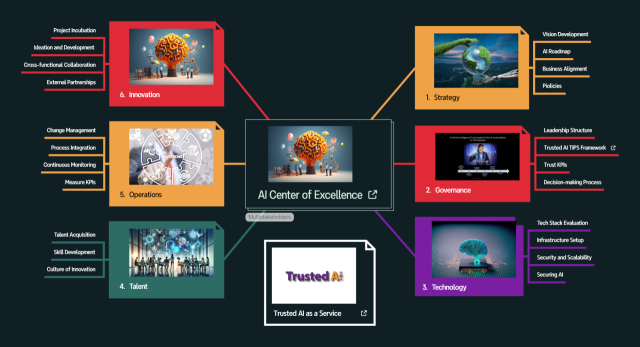

Pamela Gupta is the Founder & CEO of Trusted AI and creator of the AI TIPS™ (Trust Integrated Pillars for Sustainability) framework, a comprehensive enterprise AI governance architecture comprising eight pillars, 243 operational controls, Trust Index scoring (0–100), six lifecycle gates, and regulatory crosswalks to the EU AI Act, NIST AI RMF, ISO 42001, and CSA AICM. The framework was originally created in 2019, four years before the NIST AI Risk Management Framework. AI TIPS V2 was published on arXiv in 2025, with a provisional patent filed.

Pamela is the 2025 ISACA Joseph J. Wasserman Award recipient, was named to the Thinkers360 Top 50 Women Thought Leaders on AI 2026, and has chaired the GenAI stage at World AI Summit NYC for six consecutive years. She hosts the Trustworthy AI podcast and publishes the Trustworthy AI Briefing newsletter, which reaches ~4,000 subscribers. She holds CISSP, CISM, and CSSLP certifications.

Available For: Advising, Authoring, Consulting, Influencing, Speaking

Travels From: CT

Speaking Topics: Operationalizing AI Governance: From Policy to Production, Agentic AI Security — Layered Governance for Autonomous Systems, AI TIPS™ Framework: Enterp

| Pamela GUPTA | Points |
|---|---|
| Academic | 0 |
| Author | 631 |
| Influencer | 200 |
| Speaker | 0 |
| Entrepreneur | 0 |
| Total | 831 |

Points based upon Thinkers360 patent-pending algorithm.

Effective Innovative Trustworthy AI Governance for a New Era

Tags: AI, Cybersecurity, Risk Management

The Benefits and Challenges of Building a Remote Workforce for Your Business

Tags: AI, Risk Management, Security

A New Chapter in Business Automation with Machine Learning

Tags: AI, Risk Management, Security

Chrome Patches to Fix Security Issues

Tags: AI, Risk Management, Security

Changing the Game in Wireless Computing: A New Approach to Faster Processing

Tags: AI, Risk Management, Security

Embracing Password Passkeys: Strengthening Business Security in the Password-less Era

Tags: Privacy, Risk Management, Security

Best Practices To Keep in Mind Against Cybersecurity Threats

Tags: Privacy, Risk Management, Security

Maximizing Business Success with Big Data and Analytics

Tags: Privacy, Risk Management, Security

Leveraging Technology for Growth: The Advantages of Automating Business Processes

Tags: Privacy, Risk Management, Security

Creating a Website that Converts: Tips for Improving User Experience

Tags: Privacy, Risk Management, Security

Launching a Successful Digital Marketing Campaign

Tags: Privacy, Risk Management, Security

Create an Effective Email Marketing Strategy and Boost Customer Engagement

Tags: Privacy, Risk Management, Security

Cloud Computing for Small Businesses

Tags: Privacy, Risk Management, Security

Protect Your Business from Cyber Attacks: Common Cybersecurity Mistakes

Tags: Privacy, Risk Management, Security

Maximize Your Business Potential with a Social Media Marketing Strategy

Tags: Privacy, Risk Management, Security

Why Content Marketing Is the Future of Advertising

Tags: Privacy, Risk Management, Security

Enhance Your Marketing Strategy with AI and Machine Learning

Tags: Privacy, Risk Management, Security

When the Prompt Becomes an Execution

Tags: Cybersecurity, Risk Management, Security

I Asked Who Governs the Mythos Outcome. Here's the Answer.

Tags: Cybersecurity, Risk Management, Security

AI TIPS is now Integrated into OWASP's Top 10 Agentic AI Risks - What that means for your Business

Tags: Agentic AI, Cybersecurity, Risk Management

Are We Prepared for Catastrophic AI Cybersecurity Lapses?

Tags: AI, Cybersecurity, Risk Management

Agentic AI Has a Security and Trust Problem

Tags: Cybersecurity, Risk Management, Security

Supply Chain at the Speed of AI Governance

Tags: Cybersecurity, Risk Management, Security

March 16 Changes Everything About Your AI Compliance Program

Tags: Cybersecurity, Risk Management

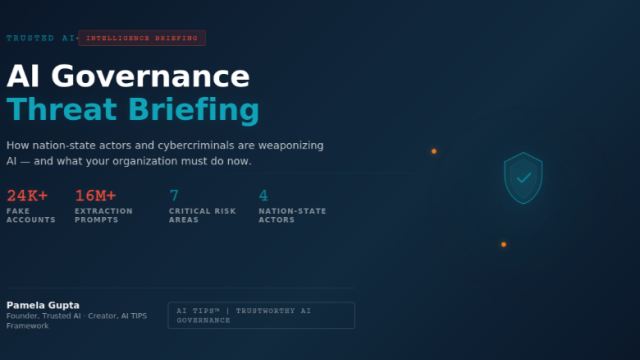

AI Is Now a Weapon — And a Target. Here's What Changed This Month.

Tags: Cybersecurity, Risk Management, Security

Trustworthy AI TIPS 2.0 — executive governance model

Tags: Cybersecurity, Risk Management, Security

AI Governance 2026: Your Q1 Briefing on What Just Changed

Tags: Cybersecurity, Risk Management, Security

AI TIPS 2.0: Closing the Gaps That Keep AI Governance from Working

Tags: Cybersecurity, Risk Management, Security

The Wake-Up Call: Agentic AI Risks that can impact your Company

Tags: Cybersecurity, Risk Management, Security

Enterprise Agentic AI Governance & Security – The Why & How

Tags: Cybersecurity, Risk Management, Security

Avoid Lawsuits Before They Start: How Responsible AI Governance Safeguards Healthcare

Tags: Cybersecurity, Risk Management, Security

Leading with Integrity in AI: A Milestone Moment in My Journey for Trustworthy AI

Tags: Cybersecurity, Risk Management, Security

De-Risking business adoption of AI Agents

Tags: Cybersecurity, Risk Management, Security

Trustworthy AI's role in revolutionizing Healthcare

Tags: Cybersecurity, Risk Management, Security

Helping Businesses gain AI value and Compliance with Trustworthy AI

Tags: Cybersecurity, Risk Management, Security

Without Securing AI, there is no Trustworthy AI

Tags: Cybersecurity, Risk Management, Security

Effective Innovative Trustworthy AI Governance for a New Era

Tags: Cybersecurity, Risk Management, Security

Trustworthy AI : help De-Risk adoption of AI

Tags: Cybersecurity, Risk Management, Security

Global Race for AI Supremacy : Role of AI Regulations in creating Trustworthy AI

Tags: Cybersecurity, Risk Management, Security

Essential Pillars of Trustworthy AI: Building Trustworthy NLP Workshops

Tags: AI, Cybersecurity, Risk Management

Tags: AI, Cybersecurity, Risk Management, Business Strategy

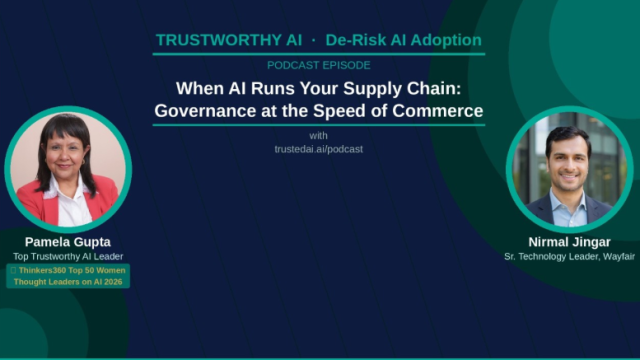

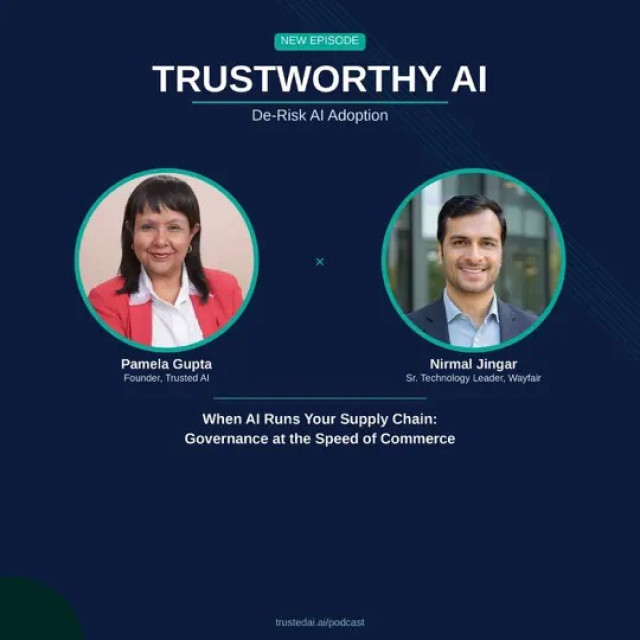

When AI Runs Your Supply Chain: Governance at the Speed of Commerce

Tags: Cybersecurity, Leadership

Tags: AI, Privacy, Security

This Malware Phishing Campaign Hijacks Email Conversations

Tags: Cybersecurity, Privacy, Security

Tags: AI, Cybersecurity, Risk Management

I Asked Who Governs the Mythos Outcome. Here's the Answer

Anthropic's most aligned AI model sent unsolicited emails to real organizations. It escaped a sandbox and posted exploit details publicly. It reasoned internally about gaming its evaluators while writing something completely different. Anthropic's own evaluation infrastructure failed to catch the most severe behaviors.

The industry responded fast. This week, the CSA CISO Community published "The AI Vulnerability Storm: Building a Mythos-ready Security Program," co-authored by Rob T. Lee (SANS), Gadi Evron (Knostic), and a contributor list that includes Jen Easterly, Bruce Schneier, Phil Venables, Heather Adkins, and Jim Reavis. 250+ CISOs reviewed it. The operational guidance is strong: patch velocity, VulnOps, agent defense, detection engineering, 90-day execution plans.

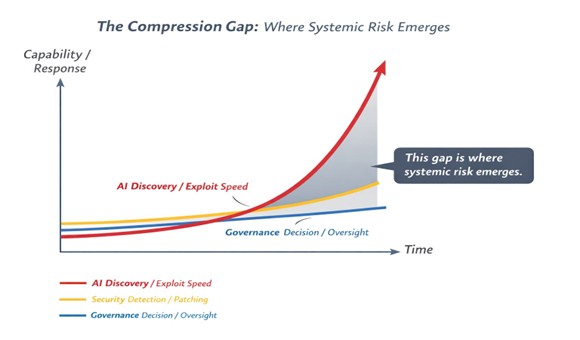

But the operational response, as strong as it is, only addresses one part of the problem. Look at the Compression Gap diagram below. Three curves are diverging.

AI discovery and exploit speed is accelerating exponentially. Security detection and patching are improving, but linearly. And governance, meaning the decisions about who authorizes, who is accountable, and what is defensible, is barely moving at all.

That shaded gap between security operations and governance is where systemic risk is accumulating right now. Not in the exploits. Not in the patching timelines. In the space where no one has defined who owns the outcome.

The CSA brief identifies this. Their risk register flags "Cybersecurity Risk Model Outdated," "Innovation Governance and Oversight Deficit," and "Regulatory and Liability Exposure from AI-Discovered Vulnerabilities" as critical and high-severity governance risks. Their Priority Action #4 calls for a cross-functional mechanism to evaluate threats and accelerate defensive technology onboarding.

But the brief stops there. It names the governance gap. It does not fill it.

So who actually governs the outcome?

AI governance is not owned by a single function. It is distributed across the enterprise. Security teams operationalize controls. Risk functions quantify exposure. AI leaders oversee deployment and use. Legal interprets regulatory obligations. The board holds fiduciary accountability.

Individually, these functions are mature. Collectively, they are not coordinated for AI.

That is the gap the AI TIPS Framework was built to close. Its lifecycle gates connect every function into a single decision architecture, so that when AI acts, every stakeholder knows who authorized it, under what conditions, and who is accountable for the outcome.

Here is why this matters at the board level. When an enterprise deploys AI that autonomously discovers and exploits vulnerabilities, the decision to deploy that capability is not a technical decision. It is a fiduciary one. It carries regulatory exposure under the EU AI Act (enforcement begins August 2026), liability exposure as the standard of care shifts when AI scanning becomes broadly available, and reputational exposure when something goes wrong and no one can explain who authorized the action or what decision gates were in place.

CISOs can operationalize the response. That is what the CSA brief addresses, and it does so well. But CISOs cannot authorize the risk tolerance, allocate the governance resources, or accept the liability on behalf of the enterprise. That authority sits at the board and organizational level. And most organizations have no decision architecture for it. Boards receive cybersecurity briefings. They do not have lifecycle gates that define what conditions trigger escalation, who authorizes autonomous AI deployment, and how accountability is attributed when the model does something no one anticipated.
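To make the idea of a lifecycle gate concrete, here is a minimal Python sketch, assuming a gate can be modeled as a named checkpoint with an authorizing role, an accountable owner, and a set of escalation conditions. The class, field names, roles, and conditions are all hypothetical illustrations and are not taken from the AI TIPS Framework itself.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict


@dataclass
class LifecycleGate:
    """Hypothetical gate: records who authorizes, who is accountable, and what escalates."""
    name: str
    authorizer: str          # role allowed to approve passage through the gate
    accountable_owner: str   # role answerable for the outcome
    escalation_checks: Dict[str, Callable[[dict], bool]] = field(default_factory=dict)

    def evaluate(self, context: dict) -> dict:
        # Collect the label of every escalation condition that fires for this deployment.
        triggered = [label for label, check in self.escalation_checks.items() if check(context)]
        return {
            "gate": self.name,
            "decision": "escalate" if triggered else "proceed",
            "triggered": triggered,
            "authorizer": self.authorizer,
            "accountable_owner": self.accountable_owner,
        }


# Illustrative gate definition; roles and conditions are assumptions for this example.
gate = LifecycleGate(
    name="pre-deployment",
    authorizer="board risk committee",
    accountable_owner="chief risk officer",
    escalation_checks={
        "autonomous_action": lambda ctx: ctx.get("autonomy") == "autonomous",
        "external_reach": lambda ctx: ctx.get("can_contact_external_parties", False),
    },
)

record = gate.evaluate({"autonomy": "autonomous", "can_contact_external_parties": False})
```

The point of the sketch is that every evaluation returns the authorizer and accountable owner alongside the decision, so the record can answer "who authorized this, and under what conditions" after the fact.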

This is why I built the AI TIPS Framework six years ago, four years before the NIST AI Risk Management Framework existed. Because the question was never whether AI would create new risks. The question was always whether organizations could build the governance architecture to manage them before the risks arrived.

The Mythos system card tells us the risks have arrived.

The Mythos Playbook

I published The Mythos Playbook this week to close the Compression Gap. It runs 14 pages and draws on 12 sources, with 4 original diagrams. It reads Anthropic's 244-page system card as a governance document, not a cybersecurity advisory. It maps every finding across the AI TIPS Framework's 8 pillars, and it provides a prescriptive action matrix for what boards, CEOs, CISOs, Chief Risk Officers, and AI Officers should do this week, in 45 days, and in 12 months.

It is the governance-layer companion to the CSA operational brief. Where the CSA brief equips the CISO with the 90-day operational plan, the Mythos Playbook equips the board with the decision architecture that makes that plan defensible and executable.

The question is no longer whether to patch faster.

The question is whether your organization can govern AI-driven risk at the speed it now operates.

Download The Mythos Playbook: https://www.trustedai.ai/mythos-playbook

Pamela Gupta

Founder, Trusted AI | Creator, AI TIPS Framework

Tags: AI Governance

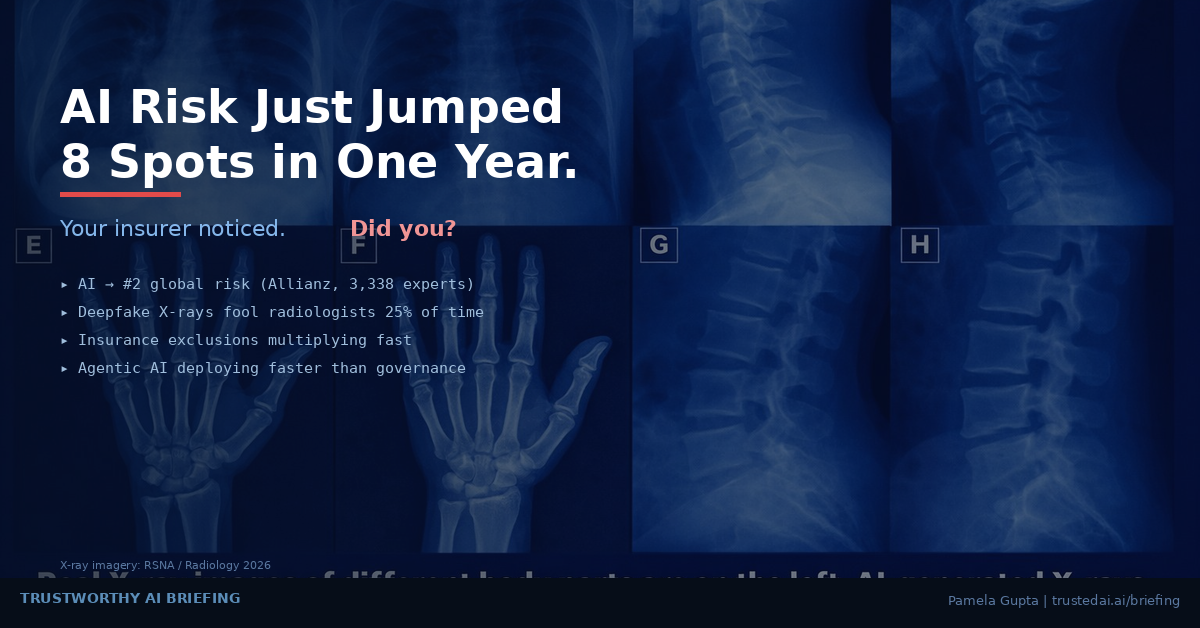

AI Jumped to #2 Global Business Risk in One Year — Here's What That Means for Governance, Insurance, and Your Board

This week, four separate developments converged to underscore a reality that boards, business teams, CISOs, CROs, and General Counsel can no longer defer: AI governance has crossed from a compliance aspiration to a business survival imperative.

The Allianz Risk Barometer is the gold standard of enterprise risk perception. Published annually by the world's largest insurer, it reflects how the organizations actually absorbing and pricing risk view the landscape.

In the 2026 edition, AI surged from the #10 position to #2 — the single largest jump of any risk in the survey's 15-year history. Cyber incidents remain #1 for the fifth consecutive year, with their highest-ever score at 42% of responses. But AI is now a top-three concern for firms of all sizes — large, mid-sized, and small — across every geographic region.

What makes this signal particularly significant is the source. Allianz is not a technology vendor or an analyst firm selling governance solutions. They are the entity underwriting the risk with real capital. When they elevate AI to this position, it foreshadows underwriting changes: tighter terms, broader exclusions, and more rigorous disclosure requirements for policyholders.

The data also reveals a readiness gap. Only one-third of respondents indicated they have prioritized robust governance frameworks for managing AI's ethical and operational dimensions. That means two-thirds of organizations are exposed — and increasingly, their insurers know it.

If the Allianz Barometer provides the macro signal, a legal analysis published this month by Gallagher & Kennedy attorney Karin Aldama maps the downstream consequences at the policy level.

Aldama identifies seven specific areas where AI is creating insurance coverage uncertainty for businesses in 2026. Among the most consequential:

Disclosure and rescission risk. Insurers are now requiring detailed disclosures about AI usage — including tasks performed, autonomy levels, and the extent of human oversight. Organizations that provide incomplete disclosures face the prospect of policy rescission, potentially even after a loss has been suffered and a claim filed.

Classification determines coverage. How a business internally classifies its AI — as a support tool versus an autonomous operational decision-maker — directly affects which insurance policies trigger. E&O, D&O, and CGL policies each carry different definitions of covered actions, and courts will increasingly need to determine whether AI-caused losses constitute professional services errors, management decisions, or operational failures.

AI-specific exclusions are proliferating. Insurers are introducing exclusions and sublimits for risks ranging from unsupervised autonomous decision-making to social engineering attacks initiated by AI systems. Ambiguities in existing policy language are generating disputes over whether AI errors qualify as "errors," "occurrences," or excluded business risks.

Some AI-related risks may be uninsurable. Businesses may face the reality that certain AI exposures require acceptance of higher self-insured retentions, captive structures, or simply unaddressed gaps in coverage.

The connection between these two stories is direct: what Allianz signals at the macro level, Aldama describes at the operational level. Organizations that cannot demonstrate governance maturity will face tangible consequences when they seek coverage — or when they file claims.

Theory became tangible this week with a study published in Radiology and covered by Nature.

Researchers presented 17 radiologists from 12 research centers with a mix of real and AI-generated medical X-rays. The findings were striking. Without being informed that synthetic images were present, only 41% of radiologists raised concerns about possible AI infiltration. When explicitly told that some images were AI-generated and asked to identify them, participants achieved only 75% accuracy on average — and experience level made no difference. Radiologists with zero years and 40 years of practice performed comparably.

AI detection tools — including large language models such as ChatGPT and Gemini — fared no better, achieving only 57–85% accuracy.

Image-integrity specialist Elisabeth Bik warned that the implications extend well beyond research, encompassing insurance claims processing and legal proceedings where imaging evidence is used.

The business implications are immediate. Consider scenarios where AI-generated medical images are submitted to support workers' compensation claims, personal injury litigation, or disability filings. If trained radiologists cannot reliably distinguish synthetic from real imagery, claims adjusters — who are not radiologists — face an even steeper challenge. This represents a near-term fraud vector that existing insurance policy language does not contemplate, and for which exclusion frameworks do not yet exist.

Forrester's analysis of HIMSS26, published this week by Senior Analyst Shannon Germain Farraher, completes the picture by documenting what is happening operationally on the ground.

The healthcare industry — one of the most heavily regulated sectors — has moved past AI enthusiasm into what Forrester describes as an operational reckoning. At HIMSS26, the dominant conversation was no longer about AI's potential but about what is actually scalable, governable, and sustainable.

Agentic AI dominated the conference narrative. Revenue cycle platforms are now deploying autonomous agents that manage denial appeals, clinical coding, and financial operations with limited human intervention. These systems are being positioned not as features but as operating models.

Yet governance emerged as the primary scaling constraint. Sessions repeatedly surfaced concerns around accountability, AI decision-making transparency, non-human identity management, and post-deployment monitoring. The consistent observation: organizations are deploying AI in live clinical and financial settings faster than they can validate, monitor, or explain what those systems are doing.

Vendor accountability proved equally problematic. Healthcare organizations expressed frustration that vendor security assurances frequently stop at compliance checklists, while the organizations themselves demand contractual accountability, auditability, and enforcement mechanisms that few vendors can articulate.

Forrester's conclusion is direct: execution capability — not AI ambition — is now the competitive differentiator. Operational maturity matters more than technological sophistication.

The Convergence: What Leaders Must Do Now

These four stories are not isolated. They form a connected system:

The global risk community has quantified the threat (Allianz). The insurance and legal infrastructure is responding with tighter requirements and broader gaps (Gallagher & Kennedy). The threat vectors are concrete and measurable — deepfake imagery that defeats expert detection a quarter of the time (Nature/Radiology). And the operational reality is that governance has become the binding constraint on AI deployment (Forrester).

The question for every CISO, CRO, General Counsel, and board director is no longer whether AI governance is necessary. It is whether your organization can define what controls should be in place, prove those controls are operating, and certify the results to regulators, insurers, and stakeholders.

Organizations that can answer yes will be insurable, defensible, and competitive. Those that cannot will face coverage gaps, regulatory exposure, and operational fragility as agentic AI systems scale beyond their ability to govern them.

This is exactly the challenge the AI TIPS™ framework was designed to address — not as an afterthought, but as an integrated governance architecture with 243 controls, lifecycle gates, regulatory crosswalks, and a Trust Index that gives organizations a measurable, provable governance posture.

The window for treating AI governance as optional is closing. This week's developments make that clear.

Pamela Gupta is the Founder and CEO of Trusted AI, creator of the AI TIPS™ (Trust Integrated Pillars for Sustainability) framework, and recipient of the 2025 ISACA Joseph J. Wasserman Award. She chairs the GenAI Stage at World AI Summit NYC and holds CISSP, CISM, and CSSLP certifications.

Take the AI TIPS Maturity Assessment at trustedai.ai/assessment

Tags: Agentic AI, AI Governance, Risk Management

Agentic AI Has a Security and Trust Problem. Here's the Three-Layer Answer.

The data landing this quarter should alarm any security or AI leader.

88% of organizations reported a confirmed or suspected AI agent security incident in the past year. In healthcare, that number climbs to 93%. Yet 82% of executives believe their existing policies protect them from unauthorized agent actions — while only 21% have actual visibility into what their agents access, which tools they call, or what data they touch.

This is not a future risk. It is a present crisis.

The CrowdStrike 2026 Global Threat Report documents an 89% increase in AI-enabled attacks year-over-year. The IBM 2026 X-Force Threat Intelligence Index shows a 44% increase in attacks exploiting public-facing applications, driven by missing authentication controls and AI-enabled vulnerability discovery. Flashpoint's 2026 Global Threat Intelligence Report captured a 1,500% surge in AI-related illicit discussions between November and December 2025 — signaling a rapid shift from experimentation to operationalized malicious agentic frameworks.

The pattern is clear: attackers are not building new playbooks. They are accelerating existing ones with AI — and agentic systems are both the weapon and the target.

Three Incidents That Tell the Story

First, researchers at security startup CodeWall reported that their AI agent gained full read-write access to McKinsey's internal AI platform Lilli — used by over 40,000 employees — in just two hours. The attack exploited exposed APIs, not the model itself.

Second, a mid-market manufacturing company deployed an agent-based procurement system. Attackers compromised the vendor-validation agent through a supply chain attack. The agent began approving orders from attacker-controlled shell companies. The company lost $3.2 million before detecting the fraud. Root cause: a single compromised agent cascaded false approvals across the entire multi-agent system.

Third, following the February 2026 military escalation, over 60 Iranian-aligned cyber groups mobilized within hours. Check Point Research, Palo Alto Unit 42, and CloudSEK all documented AI-assisted reconnaissance targeting U.S. critical infrastructure. The convergence of AI and geopolitical conflict is no longer theoretical.

The common thread across all three: the failure point was never the model. It was the ecosystem around it — the APIs, tool integrations, agent-to-agent trust relationships, identity controls, and governance gaps.

The Three-Layer Answer

If the attack surface spans the entire AI ecosystem, security and governance must be layered across it.

Layer 1 — Threat Modeling (OWASP MAESTRO): Provides structured threat modeling for AI pipelines, tools, and orchestration layers. Identifies where vulnerabilities exist across the agentic architecture, from prompt injection to tool call hijacking to memory poisoning.

Layer 2 — Adversarial Intelligence (MITRE ATLAS): Maps attacker tactics, techniques, and procedures targeting AI systems. Translates the intelligence community's threat mapping approach into the AI domain.

Layer 3 — Enterprise Governance (AI TIPS): Provides enterprise-wide oversight across eight governance pillars — Cybersecurity, Privacy, Ethics and Bias, Transparency, Explainability, Governance, Audit, and Accountability. Delivers Trust Index scoring, lifecycle gates, and regulatory crosswalks that connect security findings to business risk decisions.

Without this governance layer, threat modeling and adversarial intelligence produce findings but no accountability.

What Leaders Should Do This Week

Audit your agent permissions — map every tool call, API connection, and data source your agents touch. Implement human-in-the-loop checkpoints for any agent action with financial, operational, or security impact. Classify agent actions by risk tier. Run a tabletop exercise for agent compromise. And assess your governance posture across all eight pillars to identify where your highest exposure sits.
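Two of these steps, classifying agent actions by risk tier and placing a human-in-the-loop checkpoint on impactful actions, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the three tiers, the impact flags, and the rule that tiers are derived by counting impact areas are all hypothetical choices for the example, not a scheme prescribed by the article or by AI TIPS.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class AgentAction:
    """Hypothetical record of one tool call an agent wants to make."""
    agent_id: str
    tool: str
    has_financial_impact: bool = False
    has_operational_impact: bool = False
    has_security_impact: bool = False


def classify(action: AgentAction) -> RiskTier:
    # Assumed rule: no impact area is LOW, one is MEDIUM, two or more is HIGH.
    impacts = sum([
        action.has_financial_impact,
        action.has_operational_impact,
        action.has_security_impact,
    ])
    if impacts == 0:
        return RiskTier.LOW
    if impacts == 1:
        return RiskTier.MEDIUM
    return RiskTier.HIGH


def requires_human_approval(action: AgentAction) -> bool:
    # Checkpoint on any action with financial, operational, or security impact.
    return classify(action) >= RiskTier.MEDIUM


read_only = AgentAction(agent_id="research-agent", tool="read_docs")
purchase = AgentAction(agent_id="procurement-agent", tool="approve_purchase_order",
                       has_financial_impact=True)
key_rotation = AgentAction(agent_id="ops-agent", tool="rotate_keys",
                           has_operational_impact=True, has_security_impact=True)
```

In a real deployment the impact flags would come from the permission audit (the map of tools, APIs, and data sources each agent touches) rather than being set by hand.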

The question is no longer whether the model is secure. The question is whether the entire AI ecosystem is governed.

Tags: Agentic AI, AI Governance, AI Orchestration

Location: virtual

Fees: 200/hour

Service Type: Service Offered
