Mar25

This week, four separate developments converged on a reality that boards, business teams, CISOs, CROs, and General Counsel can no longer ignore: AI governance has moved from a compliance aspiration to a business survival imperative.

The Allianz Risk Barometer is the gold standard of enterprise risk perception. Published annually by the world's largest insurer, it reflects how the organizations actually absorbing and pricing risk view the landscape.

In the 2026 edition, AI surged from the #10 position to #2 — the single largest jump of any risk in the survey's 15-year history. Cyber incidents remain #1 for the fifth consecutive year, with their highest-ever score at 42% of responses. But AI is now a top-three concern for firms of all sizes — large, mid-sized, and small — across every geographic region.

What makes this signal particularly significant is the source. Allianz is not a technology vendor or an analyst firm selling governance solutions. It is the entity underwriting the risk with real capital. When it elevates AI to this position, it foreshadows underwriting changes: tighter terms, broader exclusions, and more rigorous disclosure requirements for policyholders.

The data also reveal a readiness gap. Only one-third of respondents indicated they have prioritized robust governance frameworks for managing AI's ethical and operational dimensions. That leaves roughly two-thirds of respondents without such a framework as a priority, and increasingly, their insurers know it.

If the Allianz Barometer provides the macro signal, a legal analysis published this month by Gallagher & Kennedy attorney Karin Aldama maps the downstream consequences at the policy level.

Aldama identifies seven specific areas where AI is creating insurance coverage uncertainty for businesses in 2026. Among the most consequential:

Disclosure and rescission risk. Insurers are now requiring detailed disclosures about AI usage — including tasks performed, autonomy levels, and the extent of human oversight. Organizations that provide incomplete disclosures face the prospect of policy rescission, potentially even after a loss has been suffered and a claim filed.

Classification determines coverage. How a business internally classifies its AI, as a support tool or as an autonomous operational decision-maker, directly affects which insurance policies are triggered. Errors and omissions (E&O), directors and officers (D&O), and commercial general liability (CGL) policies each carry different definitions of covered actions, and courts will increasingly need to determine whether AI-caused losses constitute professional services errors, management decisions, or operational failures.

AI-specific exclusions are proliferating. Insurers are introducing exclusions and sublimits for risks ranging from unsupervised autonomous decision-making to social engineering attacks initiated by AI systems. Ambiguities in existing policy language are generating disputes over whether AI errors qualify as "errors," "occurrences," or excluded business risks.

Some AI-related risks may be uninsurable. Businesses may face the reality that certain AI exposures require acceptance of higher self-insured retentions, captive structures, or simply unaddressed gaps in coverage.

The connection between these two stories is direct: what Allianz signals at the macro level, Aldama describes at the operational level. Organizations that cannot demonstrate governance maturity will face tangible consequences when they seek coverage — or when they file claims.

Theory became tangible this week with a study published in Radiology and covered by Nature.

Researchers presented 17 radiologists from 12 research centers with a mix of real and AI-generated medical X-rays. The findings were striking. Without being informed that synthetic images were present, only 41% of radiologists raised concerns about possible AI infiltration. When explicitly told that some images were AI-generated and asked to identify them, participants achieved only 75% accuracy on average — and experience level made no difference. Radiologists with zero years and 40 years of practice performed comparably.

AI detection tools, including large language models such as ChatGPT and Gemini, were not reliably better, with accuracy ranging from 57% to 85%.

Image-integrity specialist Elisabeth Bik warned that the implications extend well beyond research, encompassing insurance claims processing and legal proceedings where imaging evidence is used.

The business implications are immediate. Consider scenarios where AI-generated medical images are submitted to support workers' compensation claims, personal injury litigation, or disability filings. If trained radiologists cannot reliably distinguish synthetic from real imagery, claims adjusters — who are not radiologists — face an even steeper challenge. This represents a near-term fraud vector that existing insurance policy language does not contemplate, and for which exclusion frameworks do not yet exist.

Forrester's analysis of HIMSS26, published this week by Senior Analyst Shannon Germain Farraher, completes the picture by documenting what is happening operationally on the ground.

The healthcare industry — one of the most heavily regulated sectors — has moved past AI enthusiasm into what Forrester describes as an operational reckoning. At HIMSS26, the dominant conversation was no longer about AI's potential but about what is actually scalable, governable, and sustainable.

Agentic AI dominated the conference narrative. Revenue cycle platforms are now deploying autonomous agents that manage denial appeals, clinical coding, and financial operations with limited human intervention. These systems are being positioned not as features but as operating models.

Yet governance emerged as the primary scaling constraint. Sessions repeatedly surfaced concerns around accountability, AI decision-making transparency, non-human identity management, and post-deployment monitoring. The consistent observation: organizations are deploying AI in live clinical and financial settings faster than they can validate, monitor, or explain what those systems are doing.

Vendor accountability proved equally problematic. Healthcare organizations expressed frustration that vendor security assurances frequently stop at compliance checklists, while the organizations themselves demand contractual accountability, auditability, and enforcement mechanisms that few vendors can articulate.

Forrester's conclusion is direct: execution capability — not AI ambition — is now the competitive differentiator. Operational maturity matters more than technological sophistication.

The Convergence: What Leaders Must Do Now

These four stories are not isolated. They form a connected system:

The global risk community has quantified the threat (Allianz). The insurance and legal infrastructure is responding with tighter requirements and broader gaps (Gallagher & Kennedy). The threat vectors are concrete and measurable — deepfake imagery that defeats expert detection a quarter of the time (Nature/Radiology). And the operational reality is that governance has become the binding constraint on AI deployment (Forrester).

The question for every CISO, CRO, General Counsel, and board director is no longer whether AI governance is necessary. It is whether your organization can define what controls should be in place, prove those controls are operating, and certify the results to regulators, insurers, and stakeholders.

Organizations that can answer yes will be insurable, defensible, and competitive. Those that cannot will face coverage gaps, regulatory exposure, and operational fragility as agentic AI systems scale beyond their ability to govern them.

This is exactly the challenge the AI TIPS™ framework was designed to address — not as an afterthought, but as an integrated governance architecture with 243 controls, lifecycle gates, regulatory crosswalks, and a Trust Index that gives organizations a measurable, provable governance posture.

The window for treating AI governance as optional is closing. This week's developments make that clear.

Pamela Gupta is the Founder and CEO of Trusted AI, creator of the AI TIPS™ (Trust Integrated Pillars for Sustainability) framework, and recipient of the 2025 ISACA Joseph J. Wasserman Award. She chairs the GenAI Stage at World AI Summit NYC and holds CISSP, CISM, and CSSLP certifications.

Take the AI TIPS Maturity Assessment at trustedai.ai/assessment

By Pamela Gupta

Keywords: Agentic AI, AI Governance, Risk Management

Bad Emissions Data Is Now a Supply Chain Risk

Bad Emissions Data Is Now a Supply Chain Risk Friday’s Change Reflection Quote - Leadership of Change - Change Leaders Diagnose Strategic Failure

Friday’s Change Reflection Quote - Leadership of Change - Change Leaders Diagnose Strategic Failure The Corix Partners Friday Reading List - May 15, 2026

The Corix Partners Friday Reading List - May 15, 2026 Beyond Automation: A Strategic Intelligence System for Business Survival and Growth

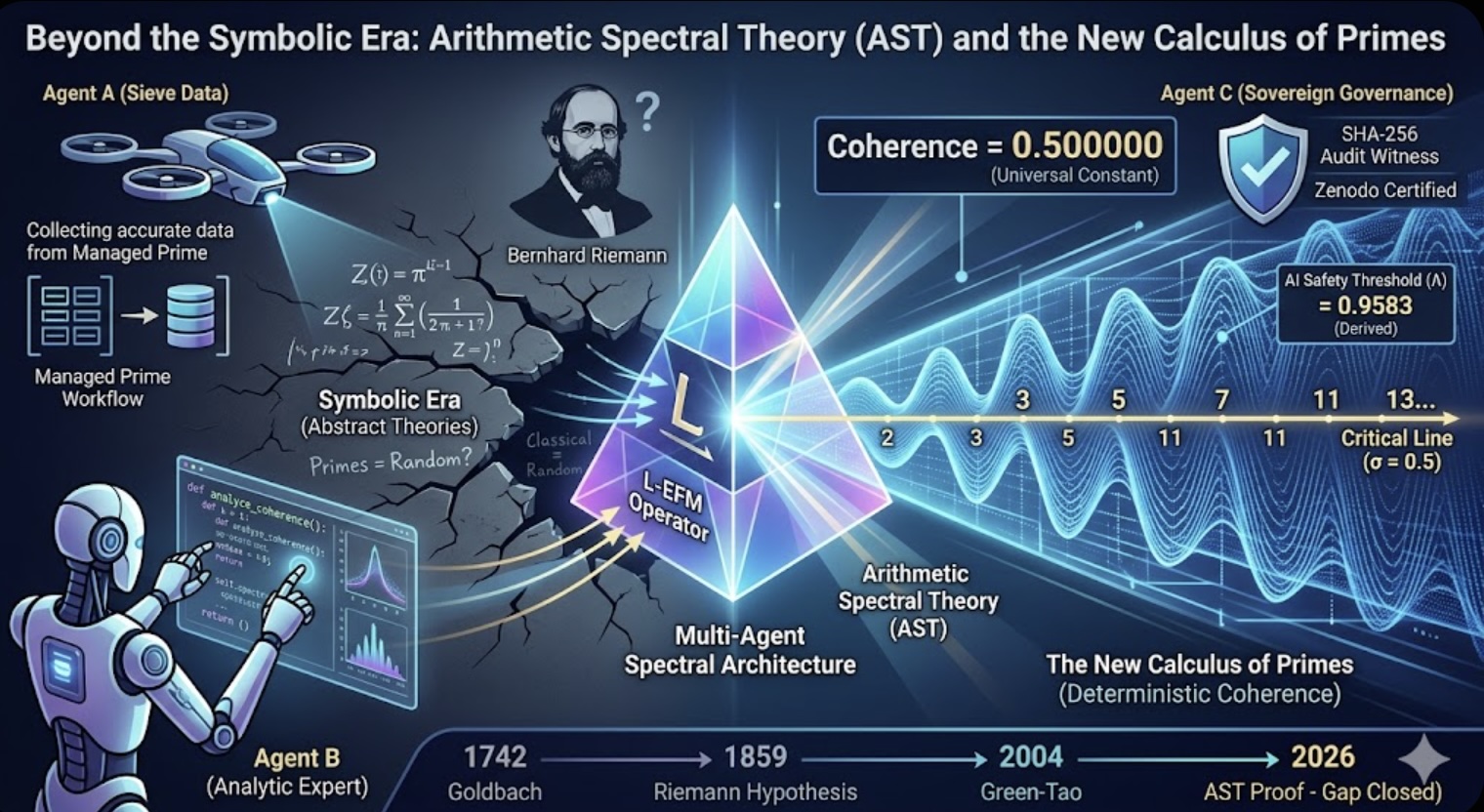

Beyond Automation: A Strategic Intelligence System for Business Survival and Growth Beyond the Symbolic Era: Arithmetic Spectral Theory (AST) and the New Calculus of Primes

Beyond the Symbolic Era: Arithmetic Spectral Theory (AST) and the New Calculus of Primes