May 09

For thirty years, we taught humans to think like machines. We rewarded precision over interpretation, output over insight, the answer over the question. The schools followed the incentive. The companies followed the schools. We defunded the liberal arts and called it progress, because progress was measured in productivity, and productivity was measured by how well humans could behave like the machines we hadn't yet built. Now we've built them. And the machines are better at being machines than we ever were.

The skills we spent three decades pushing aside are the ones that suddenly matter most: asking real questions, sitting with ambiguity, reasoning ethically, finding meaning across domains. The capabilities we treated as impractical are the ones AI cannot replicate. The capabilities we treated as essential are the ones it just automated. Most leaders haven't caught up to that inversion yet. They're still operating as if the old skills are the valuable ones. They're handing AI the work that machines do well and quietly losing the work that only humans can do. I call what happens next the Cognitive Rust Belt.

The Industrial Rust Belt happened because people stopped doing the work, then they stopped knowing the work, then they stopped knowing they had stopped knowing. By the time anyone named what was happening, two generations of capability were gone.

The same thing is happening right now, in offices, on laptops, inside the heads of people who would tell you they're getting more done than ever. Judgment thins. Curiosity narrows. Most leaders I work with are already further into this than they realize. Not because they're lazy. Because the market handed them tools and called it fluency. This isn't fluency. It's tourism.

A tourist with a language translator can order dinner and find the train station. They cannot negotiate, argue, create, or lead in a language they never truly learned. That's where most AI users live right now, and the productivity gains are real enough to keep them from noticing. The fix is not better prompts. The fix is rebuilding the thinking muscle that AI is quietly replacing. Not learning to talk to a smart machine. Learning to think with one.

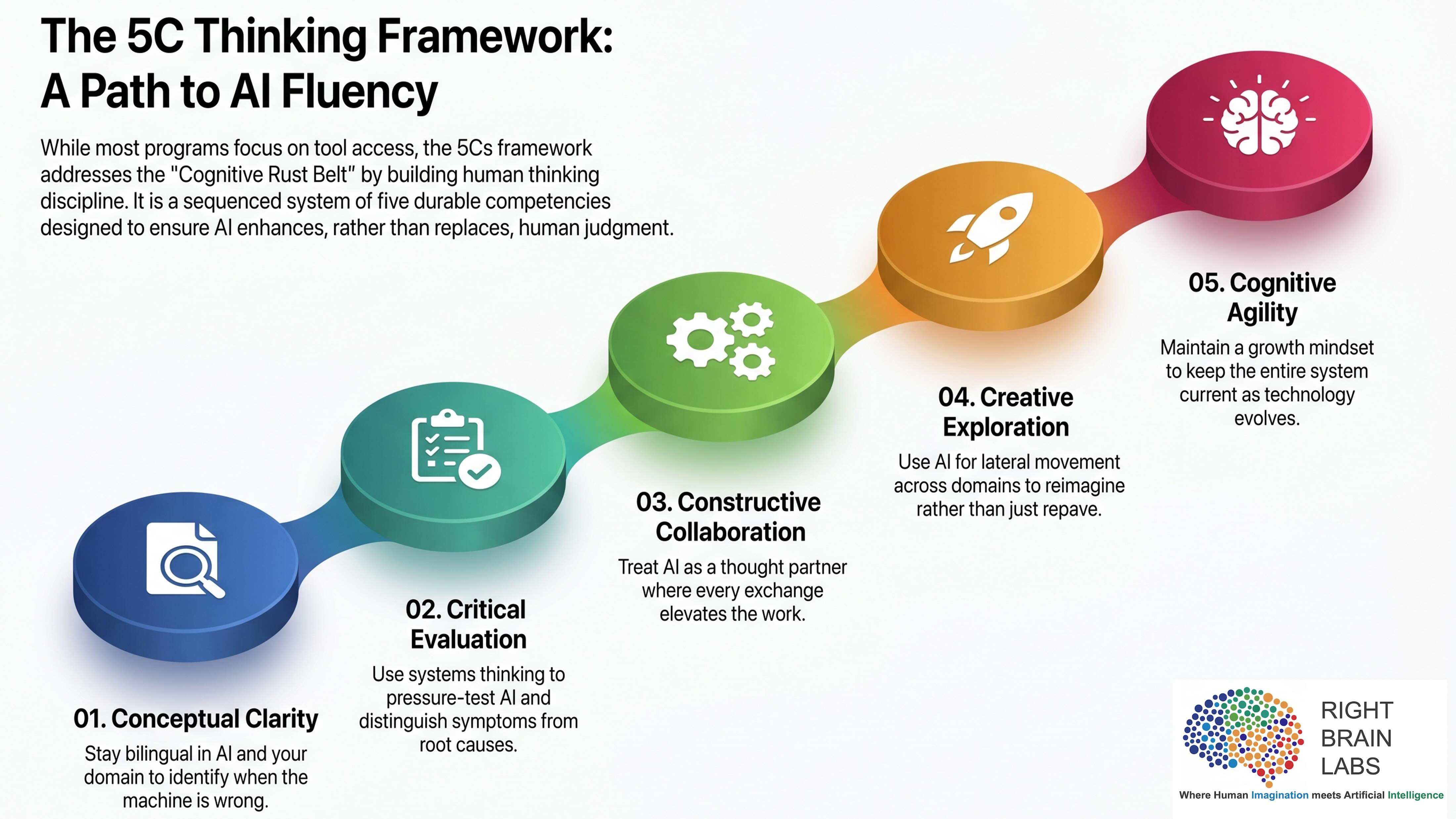

That's the distinction Right Brain Labs is built around. AI Fluency, not AI Literacy. Thinking with AI, not using it. And the practical work of getting there sits in five competencies we call the 5Cs. They're sequenced deliberately.

01. Conceptual Clarity

AI fluency is not a single language. It's bilingualism. You need fluency in AI and fluency in your domain, and the market keeps selling the first as a replacement for the second. The reality runs the other way. The more capable AI gets, the more valuable deep domain expertise becomes, because someone has to be able to feel when the machine is wrong.

A developer who stopped studying algorithms can't tell when AI generates code that compiles but fails in production. A physician who stopped reading the literature can't tell when AI suggests a treatment that reads well and is dangerous. A lawyer who stopped doing the slow work of reasoning through case law can't tell when AI cites a precedent that doesn't actually say what the brief claims it says. The output is plausible. The bluff is what breaks. This is the trap most leaders are walking into right now. They're using AI as a reason to stop developing the underlying expertise, and they're calling that progress. AI didn't make domain mastery obsolete. It made domain bluffing fatal. The fastest way into the Cognitive Rust Belt is to outsource the work that built your judgment in the first place.

Domain expertise at the level AI demands is not surface knowledge. It's First Principles thinking. The load-bearing assumptions of your field. The physics underneath the playbook. The why behind the how. A developer who knows algorithms from First Principles spots AI-generated code that looks correct but violates a fundamental invariant. A physician who knows physiology from First Principles spots AI recommendations that contradict basic mechanism. Surface knowledge waves the AI's surface answer through. First Principles knowledge is what survives contact with confident output that's quietly wrong.

Conceptual Clarity is the discipline of staying bilingual at depth: keeping your domain expertise sharp while developing the second language, AI, well enough to genuinely think in it.

02. Critical Evaluation

AI produces beautiful sentences. That's the problem. The output looks clean. The logic holds together on the surface. The deck arrives on Thursday and nobody asks where the thinking came from. The machine didn't lie. It just answered the question you asked, not the question you should have asked. That distinction requires a human, specifically a human trained to ask the next question.

This is convergent thinking, and it's where AI users most often fail. Convergent thinking is the discipline of narrowing toward what's true. Eliminating the plausible-but-wrong. Pressure-testing the claim until only the load-bearing parts remain. AI is fluent at generating possibilities. It's much weaker at killing the bad ones, because killing requires judgment about what matters, and judgment is yours.

Three traditions live inside this competency, and all three are forms of narrowing. The first is Socratic questioning. Not interrogation. The disciplined act of asking: On what basis? What assumption is hidden in this answer? What would have to be true for this to hold? The Greeks built a civilization on this practice. English majors spent four years close-reading texts, finding what the author assumed without saying it, arguing with the argument.

The second is convergent thinking as a cognitive mode. The deliberate move from many to one. From options to decision. AI can generate twenty strategies in a minute. Choosing among them is convergent work, and it requires you to hold criteria the machine doesn't have. Your business context. Your team's actual capacity. Your read of the market. The convergence is yours.

The third is ethics. This is where Critical Evaluation stops being a quality control move and becomes a moral one. It's not enough to ask whether AI's answer is true. You have to ask whether it's right. Whether it serves the people it affects. Whether it aligns with what your organization actually stands for, not what's printed on the wall. The leader who delegated the deck to AI also delegated the ethical reasoning that should have shaped the deck. That's the abdication that matters most.

Critical Evaluation sits second on purpose. Before you collaborate with AI, you have to know how to challenge it. Every leader who skips this step isn't collaborating. They're delegating. Delegation becomes dependency.

03. Constructive Collaboration

There's a rule in improv every performer learns first. "Yes, and." Not "yes, but." Not "interesting, however." You accept what your partner brings. Then you add to it. The scene goes somewhere neither of you planned, because the building is the point.

Most people use AI like a vending machine. Insert prompt, receive output, move on. That's not collaboration. That's extraction. Extraction has a ceiling, because you can only get back what the machine generates from what you gave it. You didn't build anything. You ordered something.

Constructive Collaboration is what happens when you stay in the scene. You bring a problem. AI brings a perspective. You push back. AI refines. You redirect. AI surprises you. The exchange goes somewhere neither party anticipated. That's the difference between getting an answer and building an argument. Between using AI and thinking with it. The word is Constructive, not Creative. Creative implies a solo act with a sophisticated tool. Constructive requires two parties, genuine building, and the discipline to keep saying yes-and even when the scene goes somewhere unexpected. Especially then.

04. Creative Exploration

If Critical Evaluation is convergent thinking, Creative Exploration is its opposite and partner. Divergent thinking. The deliberate widening of the field. The move from one to many, from familiar to unknown, from what you already know to what you haven't yet thought to ask. The best ideas in history rarely came from the deepest expert in the room. They came from the person who wandered in from somewhere else. The physicist who read too much biology. The architect who couldn't stop thinking about how water moves. Lateral thinking has always been the secret engine of breakthrough. The problem is that lateral thinking required accidents. The right book, the right dinner conversation, the right rabbit hole at the right moment. Most people never got lucky enough, so they went deeper into what they already knew. Deeper is safe. Deeper is defensible. Deeper rarely produces the answer nobody else found.

AI changes the structure of this entirely. For the first time, expertise is not the price of entry into a domain. You don't need a PhD in behavioral economics to reason through a pricing problem using behavioral economics. You don't need a background in biomimicry to ask whether the way a forest distributes resources might solve your organizational design challenge. AI is the universal passport. Every domain is now accessible. Divergent thinking, which used to depend on luck and time, now depends on willingness. Most people use AI to go deeper into familiar territory. Same domain, same assumptions, same frame, better output. That's expansion. It has value. It is not exploration.

Creative Exploration asks a different question. Not "how do I get a better answer to the question I already have," but "what would someone from a completely different field even think to ask here." With AI as your thought partner, you can take on each of those perspectives in turn.

05. Cognitive Agility

The truly bilingual person doesn't translate. They think in both languages simultaneously, switching without friction, using each one for what it does best. Neither language diminishes the other. Both get sharper with use. That's the destination. Not AI literacy. Not even AI fluency as a fixed state. A mind that keeps growing as the technology grows.

Every other competency in this framework can be learned to a plateau. Conceptual Clarity reaches a working level. Critical Evaluation becomes habit. Constructive Collaboration finds its rhythm. Creative Exploration runs out of new territory eventually.

Cognitive Agility has no ceiling because the AI age has no finish line. The models released in 2025 were categorically different from those of 2023. What arrives in 2027 will likely make today's tools look primitive. Any framework built on current tools expires with them. Cognitive Agility is what survives every update. It's the Think-Do-Learn-Adapt loop running at full speed, in a human, indefinitely.

It's also the discipline that holds the other four together. Convergent and divergent thinking are useless if you can't tell which mode the moment requires. Bilingual fluency degrades if you stop practicing either language. Ethical reasoning calcifies if you stop updating it against new capabilities. Cognitive Agility is the meta-skill that keeps the rest current. Stay in the loop. That's the whole game.

What About Context?

Whenever I share this framework, someone asks where Contextual Awareness fits. It's a sharp question and worth answering directly, because it tells me a lot about what the asker is wrestling with. Context isn't missing. It's the substrate every one of the 5Cs already operates in.

Conceptual Clarity is contextual by definition. The whole bilingual claim is that you have to know your domain in context, deeply, before you can tell when AI's answer doesn't fit it. Critical Evaluation is the act of locating an answer inside the context that produced it, and inside the values that should constrain it. Constructive Collaboration builds context across exchanges. Creative Exploration only works when you understand the context you're trying to leave. Cognitive Agility is the discipline of updating your context model as the world updates around you.

What This Actually Means in Practice

The Cognitive Rust Belt isn't coming. It's here. It started forming the moment the first leader looked at a clean AI output and stopped asking the next question. It's spreading every day, in every organization, inside every workflow that has quietly traded human judgment for machine plausibility. The 5Cs are how you build your way out. Not by using AI less. By thinking with it more deliberately.
