Jan 07

This is a brief post in response to the current focus on the topic. Something longer will follow.

Q: Will quantum computers defeat encryption?

A: Yes, certain types of encryption, including many that are in common use today.

Q: Do we see that as an immediate, existential threat?

A: No. Or at least we didn’t a few weeks ago, when the timeline for suitable quantum computers was “years away”. However, the recent paper published by researchers in China has raised some interesting questions.

The paper was published a few weeks back, but the news reached the mainstream press on 4 Jan and my inbox has been buzzing ever since.

If you haven’t heard about it, this article by Alexander Martin in The Record gives a useful (albeit quite technical) summary, including the reasons experts are sceptical.

In 1994 Peter Shor spiced up the nascent world of quantum computing theory by presenting an approach (now simply referred to as Shor’s Algorithm) that would allow quantum computers to crack certain types of mainstream encryption.

This was important insofar as it was one of the first specific ‘use cases’ for quantum computers. It was also highly theoretical, as the hardware required to run it was far in the future.

What is important to understand is that just about any encryption can be “decoded” mathematically. It’s just that it takes an awful lot of calculations, so even with today’s most powerful supercomputers, breaking even basic encryption can take years, decades or even millennia. For certain mathematical calculations, the way quantum computers work makes them millions of times more efficient. However, the only quantum computers currently available are very early versions, which nobody has considered capable of running Shor’s Algorithm effectively.
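To make the “decoded mathematically” point concrete, here is a minimal sketch in Python of the classical skeleton of Shor’s Algorithm, assuming toy-sized numbers. Breaking RSA comes down to factoring a public number n; Shor showed that factoring reduces to finding the “order” r of a random number a modulo n (the smallest r with a^r ≡ 1 mod n). In this sketch that order is found by brute force, which is exactly the step whose cost explodes for real key sizes and which a large quantum computer would perform efficiently. The function names are illustrative only, not from any library.

```python
from math import gcd
import random


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    This is the step a quantum computer would perform efficiently
    (via quantum period finding); classically the work grows
    exponentially with the number of digits of n.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def shor_classical(n: int, seed: int = 0) -> tuple[int, int]:
    """Factor an odd composite n (not a prime power) using Shor's
    reduction from factoring to order finding."""
    rng = random.Random(seed)
    while True:
        a = rng.randrange(2, n)
        g = gcd(a, n)
        if g > 1:
            # Lucky guess: a already shares a factor with n.
            return g, n // g
        r = find_order(a, n)
        if r % 2 == 1:
            continue  # need an even order; try another a
        y = pow(a, r // 2, n)
        if y == n - 1:
            continue  # a^(r/2) ≡ -1 mod n only yields trivial factors
        # y*y ≡ 1 mod n with y ≠ ±1, so gcd(y - 1, n) is a proper factor.
        p = gcd(y - 1, n)
        return p, n // p


print(sorted(shor_classical(15)))    # [3, 5]
print(sorted(shor_classical(3233)))  # [53, 61]
```

For 15 or 3233 this finishes instantly; for a 2048-bit number the brute-force `find_order` loop would never finish on any classical machine, which is why the quantum speed-up for that one step matters so much.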

It’s a bit like what we saw with data transfer. These days we get frustrated if it takes more than a minute or so to download a two-hour video to our phone or tablet. In the 1990s, when I first started travelling for work, I was excited to get a 14.4 kbps modem connection. That meant synchronising Lotus Notes (there was no Outlook in those days) could take a full hour if there were even just three or four modest PowerPoint attachments. This was the norm. When broadband came along, measured in Mbps, things were far, far better for email, but we still didn’t dream of downloading full videos or music albums in moments.

That is where we are supposed to be now with quantum computers: despite the incredible achievements of the past decade, it is still early days. We call it the NISQ (Noisy Intermediate-Scale Quantum) era, because the number of qubits is modest and their quality is low.

This is supposed to be the period in which we develop detailed ideas of how to use quantum computers in the business world once they are available (see, e.g., the work of people like Esperanza Cuenca Gomez).

Therefore the news that researchers have identified how to break 2048-bit RSA encryption using a 372-qubit quantum computer is startling. IBM already has machines with more qubits than that.

It’s important to note that this is still theory: their experiments ran on a 10-qubit device and they have ‘extrapolated’ the results. And one thing we know about the quantum domain (thank you Marvel Cinematic Universe and Ant-Man!) is that its behaviour is far from linear.

Also, the paper hasn’t been peer reviewed. While there is plenty of precedent for deliberately false scientific publications, as we move beyond the post-truth world of the last few years it is hard to see why the authors would choose to publish something deliberately wrong (though possibly it was to provoke a reaction and observe how governments and corporations respond).

The expectation was (still is?) that when quantum computers are several orders of magnitude more powerful than today they will readily crack certain types of code.

This threat is well understood. The timeline isn’t. I recently wrote a piece (not yet published, so no link) that touches on the similarities with, and differences from, the Millennium Bug.

Similarity: Real chance of major (existential?) disruption to IT systems and communication

Similarity: If the right steps are taken and the problem is averted, then all the commentators will say “Gosh, all that stress for nothing. What a waste of money!”

Difference: We knew the timeline for Y2K. We have no idea of the timeline for Y2Q.

And when there is no timeline it is far harder to get alignment, to get budgets, to engage the broader set of stakeholders.

Certain actions, such as the US government’s signing into law of the Post-Quantum Cybersecurity Guidelines last month, are helpful for (a) getting headlines and (b) giving CISOs a lever for engaging their colleagues. But fundamentally, no one is excited about spending large amounts of money changing their encryption structures. And even if they were, every infosec professional will remind you that it takes only one weakness: we don’t just need to fix what we own, we also need to be confident that everything upstream and downstream is made “quantum-proof”.

This piece I wrote back in 2021 with Anahita Zardoshti (with input from Emanuele Colonnella) gives you a sense of things from the point of view of an underwriter or security professional.

Calling in the experts

My go-to person on these topics is Professor Michele Mosca. His Quantum Threat Timeline Report is the leading annual view on this topic.

I’m sure he’ll have a word or two to say on the topic, and I’m delighted that we have a webinar planned with him in late March. A lot will have happened by then, and I look forward to discussing it with him and comparing it with our previous discussions: in December 2020, and at the Quantum London “Quantum Computing in Insurance” event with Lloyd’s of London in February 2021.

Even if this all turns out to be a misunderstanding, two problems still remain. One is the point above that we need to move all our IT and comms systems to Post-Quantum Encryption and that takes time and money (more of each than we would like).

The second is a clear and present problem that is not widely discussed: some of the data that can be stolen today will still be of value when quantum computers can decrypt it (in 10+ years, based on previously presumed timelines). That makes it the perfect ‘tomorrow crime’, where someone does something bad today but the pain only shows up many years later.
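A common way to reason about this, often credited to Michele Mosca and sometimes called Mosca’s inequality, compares three durations: x, how long the data must stay confidential; y, how long it takes to migrate to post-quantum encryption; and z, how long until a suitable quantum computer exists. If x + y > z, some data encrypted with today’s methods will still need protecting after it becomes readable. Below is a minimal Python sketch; the function name and the example numbers are illustrative assumptions, not forecasts.

```python
def years_exposed(shelf_life: float, migration_time: float,
                  time_to_quantum: float) -> float:
    """Mosca's inequality: harvested data is at risk if x + y > z.

    x = shelf_life: years the data must stay confidential
    y = migration_time: years needed to move to post-quantum encryption
    z = time_to_quantum: years until a suitable quantum computer exists

    Returns the shortfall in years; anything above 0.0 means some data
    still needs protecting after quantum computers can read it.
    """
    return max(0.0, float(shelf_life + migration_time - time_to_quantum))


# Illustrative assumptions only, not predictions:
print(years_exposed(shelf_life=10, migration_time=5, time_to_quantum=12))  # 3.0
print(years_exposed(shelf_life=2, migration_time=3, time_to_quantum=15))   # 0.0
```

The uncomfortable part is that x and y are largely known to an organisation, while z is exactly the number nobody can pin down.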

We call this Harvest-today, Decrypt-tomorrow. It had a brief moment in the spotlight in November 2021 after a Booz Allen Hamilton report discussed the concept, but little happened. I suspect the topic will now get more attention in the coming weeks.

What next?

Over the coming days I’m sure we’ll learn more about this, in particular about its scalability. At the end of last year, the world broadly wished for no black swans in 2023. It would be scary, but oh so interesting, if a huge one arrived in the first week of the year.

By Paolo Cuomo

Keywords: Cybersecurity, Emerging Technology, Quantum Computing

9 cose che ho imparato nella culla dell’intelligenza artificiale

9 cose che ho imparato nella culla dell’intelligenza artificiale The Corix Partners Friday Reading List - June 5, 2026

The Corix Partners Friday Reading List - June 5, 2026 Friday’s Change Reflection Quote - Leadership of Change - Change Leaders Enable Institutional Capability

Friday’s Change Reflection Quote - Leadership of Change - Change Leaders Enable Institutional Capability The Difference Between Executive Success and CEO Readiness

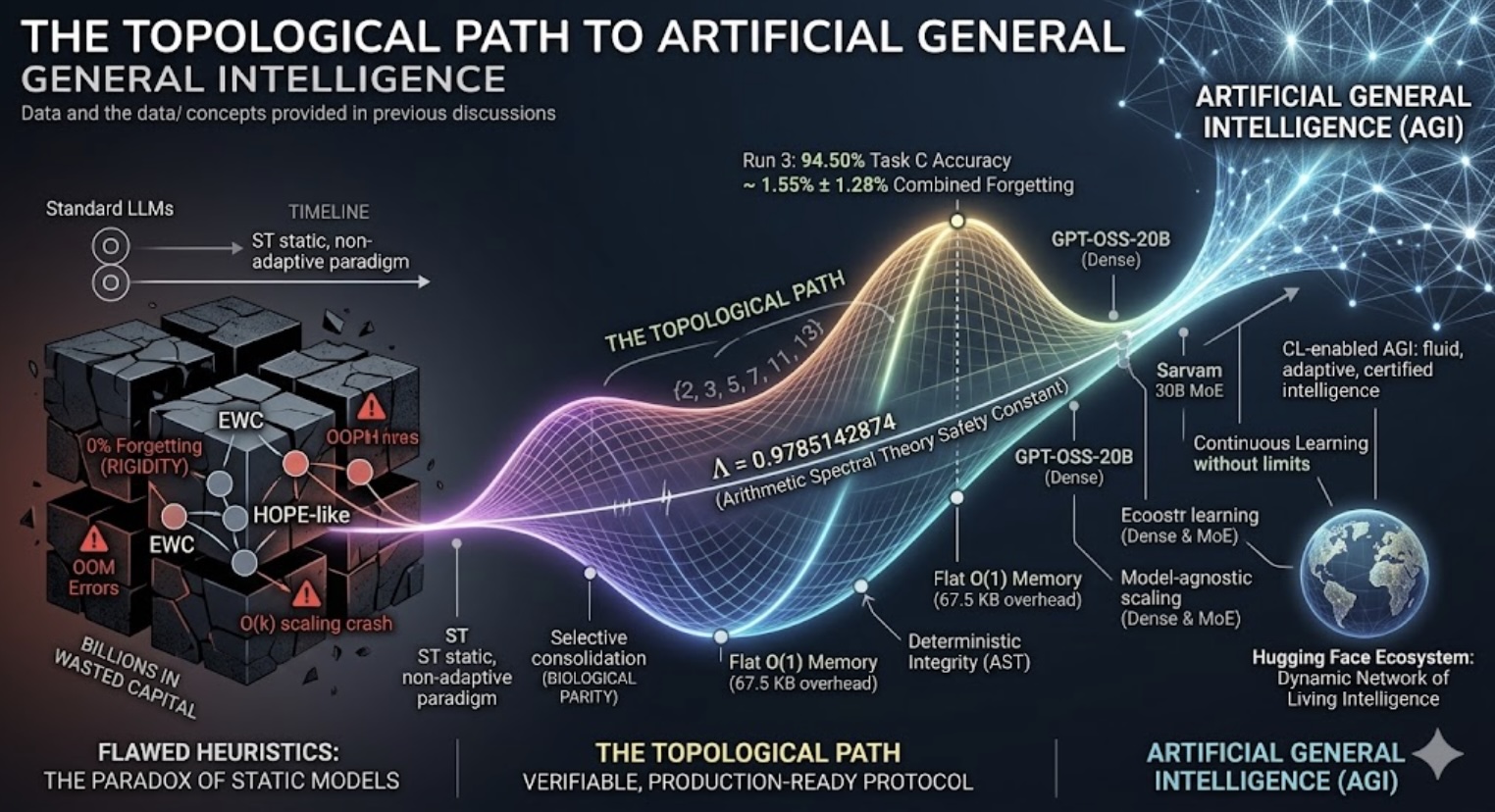

The Difference Between Executive Success and CEO Readiness The Topological Path to Artificial General Intelligence

The Topological Path to Artificial General Intelligence