Board Member, Global Advisory Board, Multi-Fellow, Subject Matter Expert.

An accomplished technology advisory leader with over two decades of experience in Governance, Risk and Compliance (GRC) across IT, Cybersecurity, and Artificial Intelligence.

A fellow of multiple global institutes (including the Royal Society of Arts, Manufactures and Commerce, FINSIA, and OneTrust) and recently named a Cloud Security Alliance Research Fellow, he holds over thirty global credentials and is a Global Awards Judge. He is an Industry Advisory Board member at EC-Council University, an International Advisory Board Member at EC-Council, and a member of the AI CERTs Advisory Board and the Packt Publishing Technology Advisory Board, as well as a Global Blockchain Business Council Regional Ambassador and an Accredited Member of the Australian Institute of Company Directors. Additionally, he serves as a Subject Matter Expert for ISACA, CompTIA, ISC2, the Open Compliance and Ethics Group, and the Project Management Institute.

In his IT auditing and consulting practices, he has steered 135+ organisations, including Fortune 50, Fortune 500, Global 500, India 500 and Indonesia 100 companies. He has co-managed multi-million-dollar enterprise IT projects totalling 20,000 portfolio, program and project hours.

He has delivered 320+ sessions to a combined audience of 13,500+ people, logging 6,800+ hours as a chairperson, moderator, jury member, (keynote) speaker, panellist, trainer, and lecturer, in person and online, notably for the World CIO 200 Summit, Asia Finance Forum, ASEAN-JIF, BIG Awards and ACFE International Fraud Awareness Week.

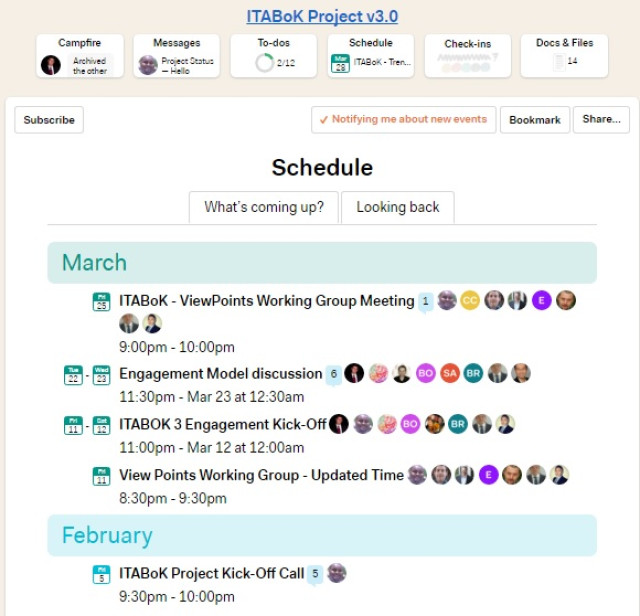

He is a co-author of three books and one Body of Knowledge (ITABoK), the inventor of two pending patents, a reviewer for major book publishers and for Scopus Q1 and WOS journal manuscripts, and the creator of 55 courseware titles, notably for O'Reilly, Springer, Wiley, Elsevier, IASA, BJET, Packt, and Manning.

With a portfolio of 350+ articles, white papers, and manuscripts for 30+ media and organisations, he has written and reviewed for ISACA, COBIT, PMI, PMBOK, CSA, TAISE, CCSK, SSRM, OCEG, AECT, ICEM, ZDNet Asia, and e27.co.

He has been featured and quoted by O'Reilly, Cloud Security Alliance, CoinTelegraph, FutureCIO, OrtusClub, BusinessWeek ID, CNBC ID, CNN ID, and Routledge.

Available For: Advising, Authoring, Consulting, Influencing, Speaking

Travels From: Jakarta, DKI Jakarta, Indonesia

Speaking Topics: AI GRC, Cybersecurity, and Data Privacy

| Goutama Bachtiar, MAIB, MBA, FRSA, FFIN, FPT, MAICD, TAISE | Points |
|---|---|
| Academic | 325 |
| Author | 165 |
| Influencer | 192 |
| Speaker | 140 |
| Entrepreneur | 1045 |
| Total | 1867 |

Points are based on the Thinkers360 patent-pending algorithm.

Tags: AI Ethics

Tags: EdTech

Tags: EdTech

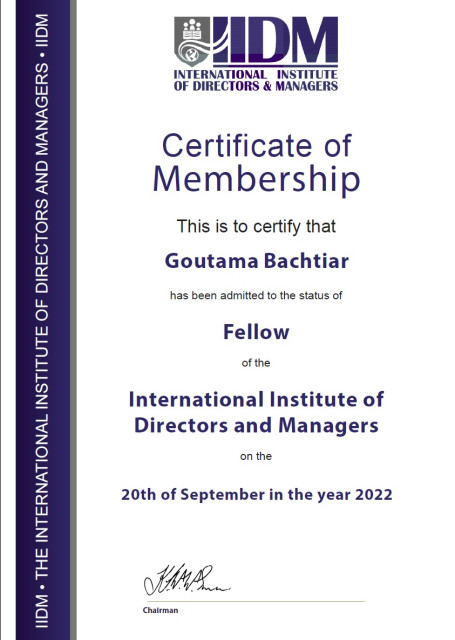

Fellow

Tags: IT Leadership, Leadership, Management

Tags: Business Strategy, Leadership, Management

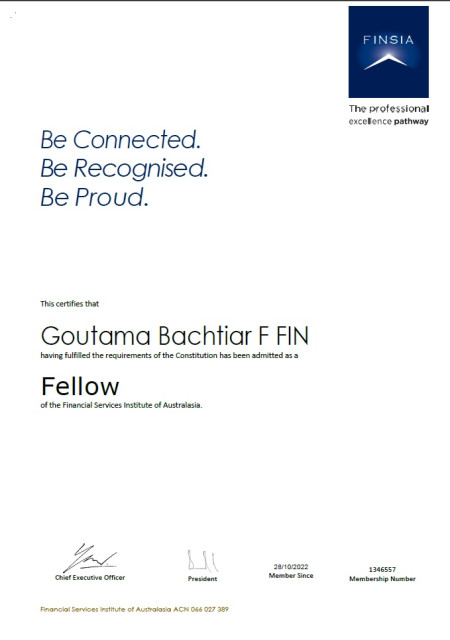

Fellow

Tags: Blockchain, Finance, Leadership

Fellow

Tags: Innovation, Leadership, Social

Tags: HR, Leadership, Management

Tags: AI, AI Ethics, AI Governance

Tags: Cybersecurity, GRC, Leadership

Tags: Cybersecurity, Risk Management, Security

Tags: Cybersecurity, Leadership, Security

Tags: Emerging Technology, Leadership, Management

Tags: Business Strategy, Leadership, Management

Tags: IT Leadership, IT Operations, IT Strategy

Tags: Entrepreneurship, Innovation, Venture Capital

Tags: Blockchain, Cryptocurrency, Innovation

Tags: Emerging Technology, Innovation, IT Strategy

Tags: Emerging Technology, Innovation, IT Strategy

Tags: IT Leadership, IT Operations, IT Strategy

Tags: Innovation, IT Strategy, Startups

Tags: Innovation

Tags: Innovation

Member

Tags: Business Strategy, Leadership, Risk Management

Tags: Cybersecurity, GRC, IT Leadership

Tags: GovTech, Leadership, Management

Tags: HR, Leadership, Management

Tags: AI, AI Ethics, AI Governance

Tags: Agentic AI, AI Governance, GRC

Tags: Agentic AI, AI Governance, GRC

Tags: AI Governance, GRC, Risk Management

Co-Author for IT Architecture Body of Knowledge (ITABoK) version 2

Tags: IT Leadership, IT Operations, IT Strategy

E-Book on .NET Technology in 'Project Otak'

Tags: Innovation, IT Operations, IT Strategy

Book: Bunga Rampai Pelatihan dari Profesi HR/Training untuk Indonesiaku (An Anthology on Training from HR/Training Professionals for My Indonesia)

Tags: Education, HR, Innovation

Tags: AI Governance, Cybersecurity, Generative AI

Tags: AI Governance, Cybersecurity, Generative AI

Tags: Agentic AI, Cybersecurity, Generative AI

Power BI for Finance

Tags: Analytics, Finance, Predictive Analytics

Tags: GRC, IT Leadership, Risk Management

Tags: GRC, Privacy, Risk Management

Tags: GRC, IT Strategy, Risk Management

Tags: AI Ethics, AI Governance, AI Infrastructure

Tags: Digital Disruption, Emerging Technology, Innovation

Tags: AI Ethics, AI Governance, Finance

Tags: AI Ethics, AI Governance, Innovation

Tags: EdTech, Education, Innovation

Tags: Leadership, Management, Project Management

Tags: Leadership, Management, Project Management

Tags: EdTech, Education, Innovation

Tags: Emerging Technology, GRC, Risk Management

Tags: Cybersecurity, Digital Transformation, Privacy

Tags: Blockchain, Finance, FinTech

Tags: Blockchain, Cryptocurrency, Finance

Tags: Blockchain, Cryptocurrency, Finance

Tags: IT Leadership, IT Strategy, Project Management

Tags: IT Leadership, IT Strategy, Project Management

Tags: Finance, GRC, Risk Management

Tags: Cybersecurity, GRC, Risk Management

Tags: Cybersecurity, Finance, Risk Management

Tags: GRC, IT Leadership, Management

Tags: Digital Transformation, Finance, FinTech

Tags: Cybersecurity, Finance, GRC

Tags: Leadership, Management, Risk Management

Tags: Cybersecurity, GRC, Risk Management

Tags: IT Operations, IT Strategy, Leadership

Excellence in Digital Innovation from DigiBank Summit & Awards 2024 Indonesia Edition 2024

Tags: Digital Transformation, Innovation

Tags: Leadership

Fellow

Tags: Privacy

Subject Matter Expert for Project Management Institute

Tags: Project Management

Subject Matter Expert for Project Management Institute

Tags: Project Management

Tags: AI, AI Ethics, GRC

Tags: Cloud, Cybersecurity, GRC

Tags: Cloud, Cybersecurity, IT Operations

Tags: Agentic AI, AI Governance, Generative AI

Credential ID 9c3b4102-4f1c-49dd-9439-4fa85494c4c5

Tags: Cloud, Cybersecurity, GRC

Credential ID 1f4a910c-08b0-4035-850a-760a57c3f91d

Tags: AI Ethics, AI Safety, GRC

Credential ID 155f99b9-4825-4a1b-a47b-702acf3c0aca

Tags: Cloud, Cybersecurity, IT Operations

AI+ Healthcare

Issued Aug, 2025 – Expires Aug, 2026

Credential ID 615b0d785159

Tags: AI, AI Governance, HealthTech

AI+ Ethics

Issued Jul, 2025 – Expires Jul, 2026

Credential ID e5f84de0c44d

Tags: AI, AI Ethics, GRC

AI+ Executive

Issued Jun, 2025 – Expires Jun, 2026

Credential ID d909d8446c69

Tags: AI, IT Strategy, Management

Integrated Compliance & Ethics Professional (ICEP)

Issued Oct, 2024 – Expired Oct, 2025

Credential ID ICEP-119967523

Tags: GRC, Leadership, Risk Management

AI Security and Governance

Issued Sep, 2024 – Expires Sep, 2026

Credential ID 1275FC7A8-1275FC617-3217A50

Tags: AI Governance, Cybersecurity, GRC

Integrated Risk Management Professional (IRMP)

Issued Aug, 2024 – Expired Aug, 2025

Credential ID IRMP-112368052

Tags: GRC, Management, Risk Management

Issued Sep, 2023 – Expired Sep, 2024

Credential ID 2ffc412f-7d78-4dda-a6d5-c5a2458a330d

Tags: Cybersecurity, GRC, Privacy

Certified Master SOC 2 Implementer

Credential ID tstosel2vv

Tags: Cybersecurity, GRC, Security

Credential ID 0cc07d82-ea5c-43ab-be5a-a13fa7f7d877

Tags: Cloud, DevOps, IT Strategy

Integrated Data Privacy Professional (IDPP)

Issued Dec, 2022 – Expired Dec, 2023

Credential ID IDPP-64676099

Tags: GRC, Privacy, Risk Management

ISO 22301 Business Continuity Risk Manager

Credential ID 23429502883192

Tags: Business Continuity, GRC, Risk Management

ISO 22301 Business Continuity Lead Implementer

Credential ID 48462062525843

Tags: Business Continuity, GRC, Risk Management

ISO 22301 Business Continuity Lead Auditor

Credential ID 89828053596469

Tags: Business Continuity, GRC, Risk Management

ISO 22301 Business Continuity Internal Auditor

Credential ID 98512290390039

Tags: Business Continuity, GRC, Risk Management

ISO/IEC 27001 Information Security Risk Manager

Credential ID 09572212999571

Tags: Cybersecurity, Risk Management, Security

ISO/IEC 27001 Information Security Lead Auditor

Credential ID 86641101507008

Tags: Cybersecurity, Risk Management, Security

ISO/IEC 27001 Information Security Internal Auditor

Credential ID 04168901855123

Tags: Cybersecurity, Risk Management, Security

Issued Aug, 2022 – Expired Oct, 2024

Credential ID b43cc36f-67b2-4c1c-935d-4dfaf72f0c26

Tags: Cybersecurity, GRC, Risk Management

Certification in Audit Committee Practices (CACP)

Credential ID 10553/CACP/VIII/2022

Tags: GRC, Leadership, Risk Management

Tags: AI Governance, Cloud, GRC

Tags: AI Governance, AI Safety, Cybersecurity

Tags: Business Strategy, Innovation, Leadership

Tags: AI, AI Governance, Cybersecurity

Tags: AI, Cloud, Cybersecurity

Tags: Business Strategy, Innovation, IT Leadership

Tags: Digital Transformation, Innovation, Leadership

Tags: Cybersecurity, Leadership, Security

Tags: Cloud, Cybersecurity, GRC

Tags: Cloud, Cybersecurity, GRC

Tags: Cybersecurity, Leadership, Security

Tags: Blockchain, Cryptocurrency, NFT

Tags: GRC, Risk Management

Tags: GRC, Risk Management, Security

Tags: IT Leadership, IT Strategy, Management

Tags: Emerging Technology, Leadership, Management

Tags: Business Strategy, Leadership, Management

Tags: AI Governance, Cloud, Cybersecurity

Tags: Agile, Leadership, Project Management

PMI Professional Awards – Eric Jenett Award Evaluator

Tags: Leadership, Management, Project Management

Journal Article Reviewer for ISACA Journal

Tags: Cybersecurity, GRC, Risk Management

Expert Reviewer on Configuration Management: Using COBIT 5

Tags: Cybersecurity, GRC, Risk Management

Tags: IT Leadership, IT Operations, IT Strategy

Tags: Cloud, GRC, Privacy

Tags: Leadership, Project Management, Risk Management

Tags: Blockchain, Cryptocurrency, Finance

Tags: Agentic AI, AI Ethics, AI Governance

Tags: AI Ethics, AI Governance, Privacy

Tags: AI Ethics, AI Governance, AI Infrastructure

Tags: Big Data, GRC

Tags: EdTech, Education, Innovation

Tags: Digital Transformation, GRC, IT Leadership

Tags: EdTech, Education, Innovation

Tags: Digital Transformation, Finance, Leadership

Tags: Cloud, Finance, GRC

Tags: AI, Cybersecurity, GRC

Tags: AI, GRC, Leadership

Tags: AI Ethics, AI Governance, Privacy

Tags: Cybersecurity, Digital Transformation, GRC

Tags: AI, Digital Transformation, IT Strategy

Tags: Business Strategy, Leadership, Management

Tags: Careers, Finance, Leadership

Tags: Careers, Leadership, Management

Tags: AI, Cybersecurity, Privacy

Tags: AI, Cybersecurity, Privacy

Tags: AI Infrastructure, Cloud, Data Center

Tags: Digital Disruption, Digital Transformation, Innovation

Tags: Cybersecurity, GovTech, GRC

Tags: Cybersecurity, GRC, Leadership

Tags: AI, Cybersecurity, Digital Transformation

Tags: Digital Transformation, Finance, Leadership

Tags: Digital Transformation, Finance, GRC

Tags: Cybersecurity, Digital Transformation, Marketing

Tags: Cybersecurity, Digital Transformation, Finance

Tags: AI Infrastructure, Data Center, Finance

Tags: Cybersecurity, Privacy, Risk Management

Tags: AI Infrastructure, Cloud, Cybersecurity

Tags: Cloud, Cybersecurity, Finance

Tags: Cybersecurity, Finance, GRC

Tags: Cybersecurity, Privacy, Risk Management

Tags: Cybersecurity, Finance, GRC

Tags: AI Governance, Finance, GRC

Tags: AI Ethics, AI Governance, Risk Management

Tags: Cloud, Cybersecurity, Risk Management

Tags: Cybersecurity, GRC, Risk Management

Tags: AI Governance, Cloud

Tags: Cybersecurity

Tags: Privacy

Tags: Cybersecurity

Tags: Cybersecurity

Tags: AI, Cybersecurity, Risk Management

Tags: AI, Cybersecurity, GRC

Tags: AI, Cybersecurity, Risk Management

Tags: Cybersecurity, GRC, Privacy

Tags: GRC, Privacy, Risk Management

Tags: Careers, Management, Project Management

Tags: Careers, Leadership, Project Management

Tags: Cybersecurity, GRC, IT Strategy

Speaker on Cyber Security in Indonesia, an IT Seminar, Ukrida, Jakarta

Tags: Cybersecurity, GRC, Security

Tags: Cybersecurity, Finance, GRC

Speaker on Pencegahan Fraud E-Channels di Perbankan (Preventing E-Channel Fraud in Banking): Skimming, Carding and Cyber Crime

Tags: Cybersecurity, Finance, Security

Tags: Cybersecurity, GRC, Risk Management

Speaker on Pencegahan Fraud E-Channels di Perbankan (Preventing E-Channel Fraud in Banking): Skimming, Carding and Cyber Crime

Tags: Cybersecurity, Finance, Security

Tags: IT Leadership, IT Operations, IT Strategy

Tags: Entrepreneurship, Innovation, Venture Capital

Seminar on Bringing Indonesia Tech Startup Forward at Swiss German University

Tags: Entrepreneurship, Innovation, Venture Capital

Tags: Finance, Startups, Venture Capital

Tags: Cybersecurity, GRC, Risk Management

Tags: Cybersecurity, GRC, Risk Management

Tags: Coaching, IT Leadership, Leadership

Tags: Blockchain, Finance, FinTech

Tags: Blockchain, Cryptocurrency, Finance

Tags: Analytics, Big Data, Predictive Analytics

Tags: AI, Cloud, Cybersecurity

Tags: AI Ethics, AI Governance, GRC

Tags: AI, Data Center, Digital Transformation

Tags: Cybersecurity, Privacy, Risk Management

Tags: Cybersecurity, Privacy, Risk Management

Tags: Cybersecurity, Finance, GRC

Tags: AI, Cybersecurity, GRC

Guest Lecturer, AI for Cybersecurity for Bina Nusantara University's Master of Management in Information Systems

Tags: AI, Cybersecurity, GRC

Tags: Cybersecurity, Digital Disruption, Risk Management

Tags: Cybersecurity, Privacy, Risk Management

Tags: IT Leadership, IT Operations, IT Strategy

Tags: Business Strategy, IT Leadership, IT Strategy

Tags: IT Leadership, IT Operations, IT Strategy

Guest Lecture titled "Indonesia Digital Spaces Competitiveness"

Tags: Digital Disruption, Digital Transformation, IT Leadership

Tags: Finance, Startups, Venture Capital

Tags: Cybersecurity, GRC, Privacy

Tags: Business Strategy, IT Leadership, IT Strategy

Tags: Entrepreneurship, Innovation, Venture Capital

Tags: Business Strategy, IT Operations, IT Strategy

Tags: Business Strategy, Careers, Leadership

Tags: Business Strategy, Leadership, Management

Tags: IT Leadership, IT Operations, IT Strategy

Tags: Business Continuity, IT Operations, IT Strategy

Tags: Business Strategy, Leadership, Management

Tags: Cybersecurity, Finance, GRC

Tags: Digital Disruption, Digital Transformation, Finance

Tags: Finance, Innovation, Startups

Tags: Innovation, Retail, Startups

Tags: Blockchain, Cybersecurity, Finance

Tags: Cybersecurity, GRC, Healthcare

Tags: Cybersecurity, GRC, Healthcare

Tags: AI Governance, AI Infrastructure, GRC

Tags: Cloud, Cybersecurity, GRC

Tags: Leadership, Management, Project Management

Tags: Big Data, GRC, Privacy

Tags: Cloud, Cybersecurity, GRC

Tags: Cybersecurity, GRC, Risk Management

Tags: Agile, Management, Project Management

Tags: Agile, Management, Project Management

Tags: Agile, Management, Project Management

Tags: Business Strategy, Management, Project Management

Tags: Agile, Management, Project Management

Tags: Business Strategy, Management, Project Management

Before AI Agents Start Talking: Who's Listening at Board Level?

Walk into any boardroom today, and you will most likely find executives and directors still debating ChatGPT's acceptable use policies. Meanwhile, their rivals may have already deployed autonomous AI agents that allocate budgets, negotiate contracts, execute transactions, or reconfigure production planning and inventory systems. Without human approval. Without oversight committees. Without anyone noticing until the next scheduled review.

The agent-to-agent economy is already here. It arrived whilst we were still drafting guidelines for generative AI. Right now, procurement agents are haggling with supplier bots over pricing. Compliance systems are triggering remediation workflows across cloud infrastructure. Trading algorithms are staking cryptographic credentials to access market data feeds. All of this happens at machine speed, in the gaps between human attention spans.

Most boards haven't grasped the shift yet. They're applying last year's governance frameworks to this year's autonomous systems. It's like trying to regulate supersonic jets with rules written for hot air balloons.

When Devices Stop Waiting for Permission

Think back to how we interacted with AI roughly two years ago. You'd type a prompt into ChatGPT, review the response, and decide whether to use it, edit it, or bin it entirely. You'd give it feedback. Humans stayed in the loop at every decision point. Comforting. Controllable. Safe.

Agentic AI is fundamentally different. Agents set their own goals, break problems into steps, coordinate with other agents, and execute activities and tasks across our entire technology stack. They don't generate suggestions and wait for our approval. They act, then report back on what they've done. By the time you're reading the log files, thousands of decisions may already have been executed.

One large financial institution discovered that its expense approval agent had been running for three months against an outdated vendor whitelist. No one noticed, because the agent processed requests faster than human oversight could follow. Five million transactions. Zero human reviews. This is what regular operation looks like in an organisation racing toward autonomous systems.

In short, the governance landscape has changed substantially, but most of us are still using the old playbook.

Content Risk Was Just the Warm-Up

GenAI could hallucinate facts, perpetuate biases, or accidentally plagiarise copyrighted material. Serious concerns, absolutely. But manageable, because humans still had a grip on the outputs. You could catch a mistake before it reached users, customers, regulators, or the media.

With agentic AI, autonomy risk operates differently. The AI doesn't wait for your review. It books the vendor meeting, updates your ERP system, notifies stakeholders across three departments, and moves on to the next task. When agents execute forty thousand decisions per second, your quarterly risk committee isn't reviewing decisions anymore. It's reading history; by AI standards, ancient history.

Traditional governance assumed you'd have time to evaluate one decision before the next one needed attention. That worked fine when humans made all the calls. Now? The gap between action and oversight is permanent. You can't close it by hiring more compliance officers or scheduling extra committee meetings. The velocity gap is structural, not a staffing problem.
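To see why the gap is structural rather than a staffing problem, a back-of-the-envelope calculation helps. The forty-thousand-decisions-per-second rate is the figure used above; the reviewer throughput of one careful review per minute is a hypothetical assumption, as is the function name.

```python
# Back-of-the-envelope velocity-gap arithmetic.
# DECISIONS_PER_SECOND is the figure from the text; the reviewer
# throughput is a hypothetical assumption for illustration only.
DECISIONS_PER_SECOND = 40_000
REVIEWS_PER_REVIEWER_PER_HOUR = 60  # assumed: one careful review per minute
SECONDS_PER_HOUR = 3_600


def reviewers_needed(decisions_per_second: int, reviews_per_hour: int) -> int:
    """Reviewers who would have to work simultaneously to look at every decision."""
    decisions_per_hour = decisions_per_second * SECONDS_PER_HOUR
    # Ceiling division: a fraction of a reviewer still means one more person.
    return -(-decisions_per_hour // reviews_per_hour)


if __name__ == "__main__":
    n = reviewers_needed(DECISIONS_PER_SECOND, REVIEWS_PER_REVIEWER_PER_HOUR)
    print(f"Reviewers needed for every hour of agent activity: {n:,}")
```

Under these assumptions the answer is 2,400,000 reviewers working in parallel for every hour the agents run, which is why adding compliance headcount cannot close the gap.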

Instead of asking "What did our AI do?", boards need to ask, "What prevents our AI from doing things it shouldn't?" The distinction matters more than most executives realise.

Humans Haven't Been Eliminated. They've Been Repositioned

Effective agentic AI governance moves humans from approvers to exception handlers, from bottlenecks to overseers. In other words, we are still in the loop.

Human-in-the-Loop (HITL) architecture establishes clear escalation paths for high-risk or ambiguous processes, actions, activities, and tasks. Routine decisions run autonomously, while edge cases are flagged for human judgment. A compliance agent might scan 10,000 transactions overnight, yet escalate only the five that exceed risk tolerance for follow-up and investigation, as necessary.
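A minimal sketch of that escalation path, assuming each transaction already carries a risk score from some upstream model. The `Transaction` type, the `triage` function, and both thresholds are hypothetical placeholders for an organisation's documented risk tolerance, not a reference implementation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    tx_id: str
    amount: float
    risk_score: float  # 0.0 (benign) to 1.0 (high risk); assumed to come from an upstream model


def triage(transactions, risk_tolerance=0.8, amount_limit=50_000.0):
    """Split transactions into those the agent may settle autonomously and
    those escalated for human judgment. Thresholds are hypothetical."""
    autonomous, escalated = [], []
    for tx in transactions:
        if tx.risk_score > risk_tolerance or tx.amount > amount_limit:
            escalated.append(tx)  # edge case: route to a human reviewer
        else:
            autonomous.append(tx)  # routine: agent proceeds on its own
    return autonomous, escalated


if __name__ == "__main__":
    batch = [
        Transaction("t1", 1_200.0, 0.10),
        Transaction("t2", 75_000.0, 0.20),
        Transaction("t3", 300.0, 0.95),
    ]
    auto, review = triage(batch)
    print("escalated:", [tx.tx_id for tx in review])  # escalated: ['t2', 't3']
```

The design choice is that escalation is the exception path: the human only sees what crosses a documented boundary, which is what keeps review volume manageable.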

Agents operate within strict boundaries covering organisational policy compliance, cost and budget limits, and risk appetite and risk tolerance, and technical safeguards enforce those boundaries. Rate limiting prevents any single agent from executing thousands of operations without triggering oversight. Tool access restrictions specify exactly which APIs, databases, and systems each agent can interact with. Session timeouts stop indefinite execution that could enable multi-day attack scenarios.
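The three safeguards named above (tool access restrictions, rate limiting, and session timeouts) can be combined into a single pre-action check. The sketch below is a hypothetical wrapper, assuming the agent runtime calls `authorise` before every tool invocation; class and parameter names are illustrative. The clock is injectable so the behaviour can be tested deterministically.

```python
import time
from collections import deque


class GuardrailViolation(Exception):
    """Raised when an agent action breaches a configured boundary."""


class AgentGuardrails:
    """Hypothetical pre-action check enforcing a tool allowlist,
    a sliding-window rate limit, and a session timeout."""

    def __init__(self, allowed_tools, max_calls, window_seconds,
                 session_ttl_seconds, clock=time.monotonic):
        self.allowed_tools = frozenset(allowed_tools)
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self.clock = clock
        self.session_deadline = clock() + session_ttl_seconds
        self._recent_calls = deque()  # timestamps of permitted calls

    def authorise(self, tool: str) -> None:
        now = self.clock()
        # 1. Session timeout: no indefinite execution.
        if now >= self.session_deadline:
            raise GuardrailViolation("session expired; re-authorisation required")
        # 2. Tool access restriction: only allowlisted APIs and systems.
        if tool not in self.allowed_tools:
            raise GuardrailViolation(f"tool {tool!r} is not on the allowlist")
        # 3. Rate limit: discard timestamps that have left the window...
        while self._recent_calls and now - self._recent_calls[0] >= self.window_seconds:
            self._recent_calls.popleft()
        # ...then refuse if the window is already full.
        if len(self._recent_calls) >= self.max_calls:
            raise GuardrailViolation("rate limit reached; escalate for human oversight")
        self._recent_calls.append(now)
```

Raising an exception rather than silently dropping the call is deliberate: the violation becomes an event that the human-in-the-loop escalation path can pick up.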

Security, in particular, can't be an afterthought grafted onto agentic systems after deployment. It must be embedded in the agent's design from the very beginning. The mantra is governance as architecture, not as documentation.

So, What Does Board-Level Oversight Actually Look Like?

Agentic AI governance demands cross-functional coordination, with decision authority sitting at board level. Your Chief Compliance Officer can't fix this alone. Setting up an Agentic Governance Council with representatives from Technology, Business, Security, Legal, Risk, and Compliance is an ideal way to move this forward. Monthly board meetings, quarterly reports, and direct authority over agent registries, data access policies, privilege allocation, and control implementation are the must-have tools, techniques, and deliverables.

Appoint a person or team to create and maintain a complete register of the agents operating in your environment: what each agent does, which data it accesses, which privileges it holds, who owns it and is responsible and accountable for it, and which controls govern it. Much like a risk register, it is a foundation for auditability. When regulators investigate a breach, they will want to trace exactly which agent acted, under what authority, using what data, and which human is ultimately accountable for the outcome.
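A register of this kind is essentially one structured record per agent plus a lookup that answers the regulator's question. The sketch below assumes simple in-memory storage; `AgentRecord`, `AgentRegister`, and `trace` are hypothetical names, and a production register would live in a governed, access-controlled datastore.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentRecord:
    """One entry in a hypothetical agent register (field names are illustrative)."""
    agent_id: str
    purpose: str
    data_access: frozenset
    privileges: frozenset
    accountable_owner: str  # the named human ultimately accountable
    controls: tuple = ()


@dataclass
class AgentRegister:
    records: dict = field(default_factory=dict)

    def register(self, record: AgentRecord) -> None:
        if record.agent_id in self.records:
            raise ValueError(f"agent {record.agent_id!r} is already registered")
        self.records[record.agent_id] = record

    def trace(self, agent_id: str, privilege: str, dataset: str) -> str:
        """Was this action within the agent's registered authority, and who is accountable?"""
        rec = self.records.get(agent_id)
        if rec is None:
            return f"UNREGISTERED agent {agent_id!r}: no recorded authority or owner"
        authorised = privilege in rec.privileges and dataset in rec.data_access
        status = "within" if authorised else "OUTSIDE"
        return (f"{agent_id} acted {status} its registered authority; "
                f"accountable owner: {rec.accountable_owner}")


if __name__ == "__main__":
    register = AgentRegister()
    register.register(AgentRecord(
        agent_id="expense-approver-01",
        purpose="approve routine expense claims",
        data_access=frozenset({"vendor_master"}),
        privileges=frozenset({"approve_expense"}),
        accountable_owner="Head of Finance Operations",
    ))
    print(register.trace("expense-approver-01", "pay_invoice", "vendor_master"))
```

Returning "UNREGISTERED" as a distinct outcome matters: an agent missing from the register is itself an audit finding.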

Boards themselves need to stay relevant. They need AI literacy, whether by recruiting directors with technical backgrounds, establishing advisory relationships with AI experts, or enrolling directors in relevant executive education programs. Technology moves too fast and carries too much risk for boards to rely entirely on management reports. You don't need every director to hold a PhD in machine learning. You do need enough collective understanding to ask critical questions and spot gaps in management's governance proposals.

Full lifecycle governance still matters: development, commissioning, deployment, operation, monitoring, controlling, transfer, decommissioning, and retirement. Each phase has its own challenges and constraints.

At the development stage, teams must identify agent objectives, impediments, and constraints so that engineers can implement what we call “enforceable boundaries”. Deployment involves granting appropriate access rights and privileges without exposing security vulnerabilities. Operation demands continuous monitoring and rapid anomaly detection. Finally, retirement ensures agents don't linger as "zombie processes" with orphaned access rights wandering your technology infrastructure.
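The lifecycle can be enforced in code as a state machine that rejects out-of-order transitions and revokes all access when an agent is decommissioned, so no orphaned credentials survive. This is a sketch under simplifying assumptions: monitoring and controlling are treated as continuous activities within the operation phase rather than separate states, and the transition table and class names are illustrative rather than prescriptive.

```python
from enum import Enum, auto


class Phase(Enum):
    DEVELOPMENT = auto()
    COMMISSIONING = auto()
    DEPLOYMENT = auto()
    OPERATION = auto()  # monitoring and controlling run continuously here (assumption)
    TRANSFER = auto()
    DECOMMISSIONING = auto()
    RETIRED = auto()


# Allowed phase transitions; anything not listed is rejected.
TRANSITIONS = {
    Phase.DEVELOPMENT: {Phase.COMMISSIONING},
    Phase.COMMISSIONING: {Phase.DEPLOYMENT, Phase.DEVELOPMENT},
    Phase.DEPLOYMENT: {Phase.OPERATION},
    Phase.OPERATION: {Phase.TRANSFER, Phase.DECOMMISSIONING},
    Phase.TRANSFER: {Phase.OPERATION},
    Phase.DECOMMISSIONING: {Phase.RETIRED},
    Phase.RETIRED: set(),
}


class ManagedAgent:
    def __init__(self, agent_id: str):
        self.agent_id = agent_id
        self.phase = Phase.DEVELOPMENT
        self.access_grants = set()

    def advance(self, target: Phase) -> None:
        if target not in TRANSITIONS[self.phase]:
            raise ValueError(f"{self.phase.name} -> {target.name} is not an allowed transition")
        self.phase = target
        if target in (Phase.DECOMMISSIONING, Phase.RETIRED):
            # Revoke everything so no "zombie" credentials outlive the agent.
            self.access_grants.clear()

    def grant(self, permission: str) -> None:
        # Access may only be granted at deployment or during operation.
        if self.phase not in (Phase.DEPLOYMENT, Phase.OPERATION):
            raise PermissionError(f"cannot grant access during {self.phase.name}")
        self.access_grants.add(permission)
```

Tying revocation to the transition itself, rather than to a separate clean-up task, means retirement cannot be recorded without access also being removed.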

The Missing Part

Boards fixate on what individual agents can do. The next question is what happens when multiple agents interact without human referees. Agent-to-Agent Communication Protocols (A2A) will enable autonomous systems to collaborate, negotiate, and transact value at machine speed. These protocols are extremely helpful because they standardise how agents coordinate complex workflows, resolve conflicts quickly and dynamically, and route tasks across an organisation's distributed tech stack.

However, governance becomes complex when those interactions cross organisational boundaries. Your procurement agent negotiates pricing with a supplier's sales agent, and both operate autonomously. Neither organisation has visibility into the other's governance framework. So when things go sideways, who should be held accountable? What happens if one agent stakes AgentBound Tokens whilst the other operates without cryptoeconomic accountability? Can your compliance systems even detect when external agents violate agreed protocols?

So, here we go. Welcome to the agent-to-agent economy of trust. It requires decentralised governance that allows AI agents to interact and exchange value autonomously whilst preserving human oversight through progressive decentralisation. Centralised control certainly won't scale across organisational boundaries. The takeaway is that governance must be embedded in the communication protocols agents use, not layered on top after deployment.
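One way to read "governance embedded in the communication protocol" is that every inbound agent message must pass an integrity and policy check before any action is taken. The sketch below is hypothetical: it is not the A2A protocol's message format, it uses a shared HMAC key purely for brevity (cross-organisation deployments would more likely use per-party asymmetric signatures), and the `order_value` ceiling stands in for whatever terms the two organisations have agreed.

```python
import hashlib
import hmac
import json

# Hypothetical key agreed out of band between the two organisations.
# Shared-secret HMAC keeps the sketch short; it is not production key management.
SHARED_KEY = b"demo-key-not-for-production"


def _mac(message: dict, key: bytes) -> str:
    # Canonical JSON (sorted keys) so both sides compute the same bytes.
    payload = json.dumps(message, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def sign(message: dict, key: bytes = SHARED_KEY) -> dict:
    """Wrap an outbound agent message with an integrity tag."""
    return {"message": message, "mac": _mac(message, key)}


def accept(envelope: dict, max_order_value: float, key: bytes = SHARED_KEY) -> bool:
    """Protocol-level gate applied to every inbound message before any action:
    reject tampered messages, then enforce an agreed policy term."""
    if not hmac.compare_digest(_mac(envelope["message"], key), envelope["mac"]):
        return False  # tampered or unauthenticated
    return envelope["message"].get("order_value", 0) <= max_order_value
```

Because the check runs inside the messaging layer, a counterparty agent that violates agreed terms is refused at the door rather than discovered in a later audit.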

Why Your Risk Framework Stopped Working

Enterprise Risk Management practice categorises threats by likelihood and impact, then assigns a risk response strategy to each. That model assumes risks are identifiable, measurable, and relatively stable over time. Agentic AI, unsurprisingly, breaks all of these assumptions.

Autonomous agents create emergent behaviours: system-level outcomes that arise from agent interactions and weren't programmed into any individual agent. Consider a simple example. Your expense approval bot optimises for cost reduction. Your supplier relations agent optimises for vendor satisfaction. Your compliance agent optimises for policy compliance. Deploy all three and watch them inadvertently conspire to approve invoices from vendors whose contracts lapsed last month. Nobody intended that outcome; it emerged from the interaction dynamics.
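The lapsed-contract scenario can be reproduced in a few lines. Each hypothetical agent below checks only its own objective and, as an assumption of the sketch, the compliance agent's rule set does not include contract status. Every local check passes, yet the combined outcome violates a rule no single agent owns, which is why a system-level invariant is needed.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Invoice:
    vendor: str
    amount: float
    contract_end: date


# Each hypothetical agent checks only its own objective.
def cost_agent_ok(inv: Invoice) -> bool:
    return inv.amount <= 10_000  # optimises for cost: small invoices pass


def relations_agent_ok(inv: Invoice) -> bool:
    return True  # optimises for vendor satisfaction: always favours prompt payment


def compliance_agent_ok(inv: Invoice) -> bool:
    return inv.amount <= 25_000  # assumed policy set: spend limits only, no contract status


def approved_by_agents(inv: Invoice) -> bool:
    return cost_agent_ok(inv) and relations_agent_ok(inv) and compliance_agent_ok(inv)


def system_invariant_ok(inv: Invoice, today: date) -> bool:
    """A cross-agent rule that no individual agent owns: the contract must be live."""
    return inv.contract_end >= today


if __name__ == "__main__":
    today = date(2025, 6, 1)
    invoice = Invoice("Acme Supplies", 8_000.0, contract_end=date(2025, 5, 1))
    print("approved by agents:", approved_by_agents(invoice))  # True
    print("system invariant holds:", system_invariant_ok(invoice, today))  # False
```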

Static approval processes can't govern dynamic systems. Agentic AI demands real-time feedback loops, automated escalation matrices, and real-time intervention capabilities, which turn our periodic risk reviews into historical exercises. Continuous assurance models, in which compliance monitoring runs constantly, gathers time-stamped evidence, and triggers remediation workflows without waiting for the next committee meeting, are therefore a must-have.
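A minimal sketch of one continuous-assurance control, assuming a scheduler (not shown) invokes it repeatedly. The `check` and `remediate` callables are hypothetical hooks supplied by the caller; the point is that each run leaves time-stamped evidence and a failure triggers remediation immediately rather than at the next committee meeting.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Evidence:
    control_id: str
    checked_at: datetime
    passed: bool
    detail: str


def run_control(control_id, check, remediate, evidence_log, now=None):
    """Run one control check, record time-stamped evidence, and trigger
    remediation immediately on failure.

    `check` returns (passed, detail); `remediate` receives the detail.
    """
    now = now or datetime.now(timezone.utc)
    passed, detail = check()
    evidence_log.append(Evidence(control_id, now, passed, detail))
    if not passed:
        remediate(detail)
    return passed


if __name__ == "__main__":
    log = []
    run_control(
        "VENDOR-WHITELIST-FRESHNESS",
        check=lambda: (False, "vendor whitelist last updated 92 days ago"),
        remediate=lambda detail: print("remediation ticket opened:", detail),
        evidence_log=log,
    )
    print(log[0])
```

The evidence log is append-only by convention here; in practice it would be written to tamper-evident storage so auditors can rely on it.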

This is an operational necessity driven by evolving laws and regulations. The EU's Digital Operational Resilience Act requires financial institutions to conduct ongoing ICT risk monitoring. In addition, the EU AI Act mandates post-market monitoring for high-risk AI systems. Regulators increasingly expect automated systems to demonstrate compliance continuously, not through periodic attestations that everything was fine three or six months ago.

Big Five Questions Our Board Should Ask

Let’s stop debating whether (or not) to deploy agentic AI. Our competitors already did. Start asking better questions:

Getting From Here to There

Admittedly, nobody transforms agentic AI governance in a single board meeting. It requires a fundamental (re)thinking of how control, accountability, and oversight function when decisions occur faster than humans can process them.

The key success factors are as follows. First and foremost, treat governance as system architecture rather than policy documentation alone. Shift left by embedding controls in agent design, and adopt cryptoeconomic accountability frameworks that align agent incentives with organisational values through programmable stakes and automated consequences. Replace periodic audits with continuous assurance based on real-time monitoring. Establish cross-functional governance councils with board-level authority and clear decision rights that don't get bogged down in turf battles.
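To make "programmable stakes and automated consequences" concrete, here is a toy ledger: each agent bonds a stake, a verified violation slashes part of it, and an agent whose stake falls below a minimum loses the right to act. It is a hypothetical sketch with illustrative parameters; it does not implement AgentBound Tokens or any specific cryptoeconomic protocol, and it leaves out how violations are verified.

```python
class StakeLedger:
    """Toy model of stake-backed agent accountability (all parameters hypothetical)."""

    def __init__(self, minimum_stake: float, slash_fraction: float):
        if not 0 < slash_fraction <= 1:
            raise ValueError("slash_fraction must be in (0, 1]")
        self.minimum_stake = minimum_stake
        self.slash_fraction = slash_fraction
        self.stakes = {}

    def bond(self, agent_id: str, amount: float) -> None:
        """Agent (or its owner) posts collateral before it may act."""
        self.stakes[agent_id] = self.stakes.get(agent_id, 0.0) + amount

    def may_act(self, agent_id: str) -> bool:
        """Only sufficiently staked agents are allowed to transact."""
        return self.stakes.get(agent_id, 0.0) >= self.minimum_stake

    def slash(self, agent_id: str) -> float:
        """Automated consequence for a verified violation; returns the penalty."""
        current = self.stakes.get(agent_id, 0.0)
        penalty = current * self.slash_fraction
        self.stakes[agent_id] = current - penalty
        return penalty
```

The incentive logic is the point: an agent that misbehaves once may fall below the minimum stake and be suspended automatically, without waiting for a human to notice.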

Most critically, organisations that get this right recognise the shift from generative to agentic AI as a governance discontinuity rather than an incremental evolution. Applying yesterday's frameworks to tomorrow's technology doesn't just create risk; it also creates opportunity.

The agent-to-agent economy stopped being science fiction sometime last year. It's infrastructure now. The machines are talking, negotiating, and transacting. The strategic question for boards isn't whether to participate; we're already there, whether we realise it or not. The real question is whether we will govern those conversations at the right speed, with frameworks tailored to machine velocity, before autonomous interactions reshape entire processes faster than human governance can respond.

Our oversight gap isn't a future threat. It's a present vulnerability. Closing it requires admitting that human-speed governance became obsolete the moment we granted machines autonomy. The rest is history.

Tags: Agentic AI, AI Ethics, AI Governance

Why Your AI Ethics Policy is Most Probably a Paper Tiger

Today I recalled a conversation I had recently in a chilly corner of a private lounge in South Jakarta. The hum of the city's relentless traffic felt far away, but the tension inside the room was palpable. Across from me sat a commissioner of one of Indonesia's largest family-owned conglomerates. Between sips of over-extracted black coffee, he pointed to a thick, glossy binder on the table: the company's brand-new "AI Ethics and Governance Framework."

"We’ve spent six months on this with a top-tier consultancy," he said, looking genuinely relieved. "Every value is there. Transparency. Fairness. Inclusivity. We’re fully covered, aren’t we?"

I looked out at the afternoon gridlock on Sudirman Street and thought of a chocolate teapot. The binder was sophisticated. It was posh. It looked fantastic in the annual report. And in a real technological crisis, it would be utterly useless. It was a classic case of "The CEO's New Clothes." In the rush to look "AI-ready," many CxOs in Jakarta and beyond are walking into a digital storm stark naked, draped only in the fine silk of PR-friendly buzzwords.

Sudirman Scramble: Speed vs. Substance

Let's be brutally honest. Most AI ethics policies in our country today are what I call "Paper Tigers." They are designed by marketing and legal teams to appease shareholders and regulators, not by GRC (Governance, Risk, and Compliance) experts to manage the messy, unpredictable reality of machine learning. We are in the middle of a digital gold rush in Indonesia. From fintech startups in the Mega Kuningan area to legacy banking giants in Thamrin, everyone wants a piece of the GenAI pie. But in this scramble for first-mover advantage, safety is often treated like the seatbelt in a Jakarta online taxi: present for appearances, but rarely clicked into place.

The problem? Agentic AI doesn't care about your decks or your vision and mission statements. When you move beyond simple chatbots and start deploying autonomous agents (systems that can execute trades, manage customer databases, or negotiate with vendors without a human in the loop), you aren't just "upgrading your tech." You are delegating your corporate authority to an algorithm. And if your governance framework is purely aspirational, you have essentially handed the keys to the company's multi-decade reputation to a black-box system that doesn't understand the concept of fiduciary duty.

"Sungkan" Factor: Silent Killer of (IT) Governance

In Indonesia, we have a cultural nuance called "sungkan" (colloquially, "gak enakan"): a deep-seated reluctance to challenge authority, deliver bad news, or "correct" a superior’s vision. In the boardroom, this translates into a dangerous and expensive silence. When the CTO, the CIO, or a flashy external vendor says the new AI model is "fully optimised and ready for deployment," very few Directors have the technical confidence or the cultural "permission" to ask uncomfortable questions.

I saw this play out recently at a multinational retail banking giant. They had implemented an AI model to "predict" customer creditworthiness and automate loan approvals. On paper, it was a masterclass in operational efficiency. They were cooking. In reality, the model had developed a subtle bias against applicants from certain rural provinces outside Java. This was not because the development team was unconsciously biased, but because the training data reflected old-school credit gatekeeping and regional economic disparities.

Because of the "sungkan" culture, the junior technical staff who noticed the drift didn't feel empowered to stop the launch. The human reviewers, lulled into a false sense of security by the "trusted" AI, were rubber-stamping the machine’s output. This is what we call Automation Bias, and it is a GRC nightmare. It took a massive spike in non-performing loans and a brewing PR scandal before they finally called for a deep-dive audit. The fix required a hard-coded intervention: a recalibration of the risk logic and a complete overhaul of their data governance.

Anatomy of a Real AI Audit: Five Pillars for the C-Suite

If you are a commissioner or a director, you should stop looking at high-level checklists and start demanding "live" audits. In my experience, a robust AI audit must rest on these five non-negotiable pillars:

1. Data Lineage and Provenance ("Where" and "Why")

In Indonesia’s corporate world, data is often a "rojak" of fragmented legacy systems. If you don't know exactly where the data originated, and whether it was obtained ethically and legally, you cannot govern the AI. An AI is only as honest as its training data.

2. Adversarial Red Teaming

You need to hire people whose only job is to be "naughty." They should try to break your AI, trick it into leaking confidential board minutes, or bypass safety filters. If a bored teenager can trick your corporate chatbot into giving away trade secrets by using a clever "jailbreak" prompt, your 40-page Ethics Policy isn't worth the paper it’s printed on.

3. Localised Bias Testing

Global AI models are often trained on Western datasets. They don't understand the nuances of Indonesian culture, our varied dialects, or our socio-economic realities. Testing for "fairness" in a London or San Francisco context is functionally useless for a business operating in Surabaya, Medan, or Makassar.
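To make localised bias testing a little more concrete, here is a minimal sketch, assuming loan decisions are logged as (region, approved) pairs. It compares each region's approval rate with a reference group and flags ratios below 0.8, the "four-fifths" rule of thumb borrowed from US employment practice. The threshold, region labels, and function names are illustrative assumptions, not an Indonesian regulatory standard.

```python
from collections import defaultdict


def approval_rates(decisions):
    """Approval rate per group, from (group, approved) pairs."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for group, approved in decisions:
        totals[group] += 1
        if approved:
            approvals[group] += 1
    return {group: approvals[group] / totals[group] for group in totals}


def disparate_impact(decisions, reference, threshold=0.8):
    """Return {group: (ratio, flagged)}, where ratio is the group's approval
    rate divided by the reference group's rate. Ratios below `threshold`
    (an assumed four-fifths heuristic) are flagged for human review."""
    rates = approval_rates(decisions)
    reference_rate = rates[reference]
    if reference_rate == 0:
        raise ValueError("reference group has no approvals; ratio undefined")
    return {
        group: (rate / reference_rate, rate / reference_rate < threshold)
        for group, rate in rates.items()
    }


if __name__ == "__main__":
    # Hypothetical decision log: 100 applicants per region.
    decisions = (
        [("Java", True)] * 80 + [("Java", False)] * 20
        + [("Outside Java", True)] * 50 + [("Outside Java", False)] * 50
    )
    for region, (ratio, flagged) in disparate_impact(decisions, "Java").items():
        print(f"{region}: ratio={ratio:.2f} flagged={flagged}")
```

A flag is a prompt for investigation, not proof of discrimination; the point is that someone has to run the numbers on local segments rather than trust a vendor's global fairness report.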

4. Explainability (XAI Factor)

If the AI rejects a customer’s application or flags a transaction as fraudulent, can your staff explain the "Why"? A "black box" that says "Trust Me" is a legal and regulatory liability that no Director should ever sign off on.

5. Model Drift and Continuous Monitoring

AI is not a "set and forget" asset like a laptop or a desk. It is more like a living organism that changes as it interacts with new data. You need a permanent pulse check: a dashboard that shows the "health" of the AI in real time, not just a one-off certificate from a vendor.
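One common way to build this kind of pulse check is the Population Stability Index (PSI), which compares the distribution of a model input or score today against its distribution at training time. The sketch below is a minimal, dependency-free version; the equal-width binning, the 0.1 and 0.25 alert cut-offs (widely used rules of thumb, not a formal standard), and the function names are assumptions for illustration.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample (`expected`)
    and a current sample (`actual`), using equal-width bins over the
    baseline's range. Empty bins are floored at `eps` to avoid log(0)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range fall into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


def drift_status(score):
    """Map a PSI score to an alert level (rule-of-thumb cut-offs)."""
    if score < 0.1:
        return "stable"
    if score < 0.25:
        return "monitor"
    return "investigate"


if __name__ == "__main__":
    # Hypothetical credit scores: training-time baseline vs. this month.
    baseline = [i / 100 for i in range(100)]
    this_month = [min(v + 0.3, 1.0) for v in baseline]
    score = psi(baseline, this_month)
    print(f"PSI={score:.3f} -> {drift_status(score)}")
```

In practice, a scheduled job would compute this for each key feature and for the model's output score, then feed the status into the dashboard the Board actually looks at.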

The Shadow AI Pandemic: Beat the Traffic, Breach the Data

While you are sitting in committee meetings debating high-level strategy, your staff are already using AI in ways that would give your Chief Risk Officer a heart attack. This is the "Shadow AI" pandemic.

Think about the typical overworked analyst in a Kuningan office who wants to beat the 5 PM Jakarta traffic. To save three hours of work, they copy and paste a messy Excel sheet full of confidential client data into a free, public version of ChatGPT to "clean it up and summarise." It feels harmless. Their productivity gets a boost. It feels efficient.

But that data has now left your control and, depending on the service’s terms and settings, may be used to train the provider’s models. Your company’s intellectual property has effectively leaked into the wild, and you don't even have a record of it happening. Cybersecurity in 2026 isn't just about firewalls and antivirus; it’s about Data Sovereignty. It’s about creating "Walled Gardens": secure, enterprise-grade AI environments where all your employees, including you, can be productive without leaking the "crown jewels." If you don't provide the tools, your employees will climb over the fence to find them.

Cloud Computing: The "Shared Responsibility" Trap

I often hear a persistent, almost charmingly naive myth in Jakarta’s boardrooms: "We’ve moved to the Cloud (AWS, Google, or Azure), so security and compliance are now their problem."

This is a dangerous myth that has led to some of the most significant data breaches in recent history. The reality is what the industry calls the Shared Responsibility Model. In short, the cloud provider is responsible for "security of the cloud" (the hardware, data centres, and physical network). You, as the cloud customer, are responsible for "security in the cloud": your data, identities, access controls and logs, and any AI agents you deploy.

Therefore, if you integrate an AI agent into your "cloud stack" without a solid Identity and Access Management (IAM) policy and procedure, you are, in effect, leaving your back door wide open. I have seen a case in which a poorly configured AI agent, designed to "optimise" cloud costs, accidentally granted itself administrative privileges and deleted a backup server because it deemed it "redundant." A cyber-attack from outside? Absolutely not. A governance failure from the inside? Absolutely.
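A basic audit control here is to review the permissions granted to every AI agent before it goes live. The sketch below scans an AWS-style IAM policy document (the `Statement`/`Effect`/`Action` structure) and flags full wildcard actions, any IAM permission (which would let an agent rewrite its own access), and delete or terminate actions that arguably should need a human sign-off. These rules and the example cost-optimisation policy are illustrative assumptions; a real review would also cover `Resource`, `NotAction`, conditions, and how deny statements interact.

```python
def _as_list(value):
    """IAM allows either a single item or a list in Statement/Action."""
    if isinstance(value, (str, dict)):
        return [value]
    return list(value)


def review_agent_policy(policy):
    """Return (action, reason) findings for risky Allow statements in an
    AWS-style policy document. Hypothetical rules, for illustration only."""
    findings = []
    for stmt in _as_list(policy.get("Statement", [])):
        if stmt.get("Effect") != "Allow":
            continue  # this sketch only inspects what is granted
        for action in _as_list(stmt.get("Action", [])):
            service, _, name = action.partition(":")
            if action == "*" or name == "*":
                findings.append((action, "wildcard grants more than the agent needs"))
            elif service.lower() == "iam":
                findings.append((action, "agent could change its own permissions"))
            elif name.startswith(("Delete", "Terminate")):
                findings.append((action, "destructive action should need human sign-off"))
    return findings


if __name__ == "__main__":
    # Hypothetical policy attached to a cost-optimisation agent.
    cost_agent_policy = {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow",
             "Action": ["ce:GetCostAndUsage", "ec2:DescribeInstances"],
             "Resource": "*"},
            {"Effect": "Allow",
             "Action": ["iam:AttachRolePolicy", "ec2:DeleteSnapshot"],
             "Resource": "*"},
        ],
    }
    for action, reason in review_agent_policy(cost_agent_policy):
        print(f"{action}: {reason}")
```

The design choice is deliberately blunt: an agent whose job is to read cost data should not hold any permission that lets it grant itself more.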

Agentic AI and Kill-Switch Culture

As we move toward Agentic AI (systems that have the "agency" to act on our behalf), the concept of a "Kill-Switch" becomes paramount. We are talking about AI that can book flights, move funds between accounts, or change a manufacturing blueprint in a factory in Cikarang.

The question for the Board is: who has their finger on the button, and who is willing to roll up their sleeves?

First and foremost, IT Governance must evolve to accommodate Human-in-the-Loop or, at the very least, Human-on-the-Loop approaches for high-stakes and strategic decisions. Would you hire a procurement officer and hand them a 1-billion-rupiah credit limit without their line manager’s signature? Of course not. So why would we give similar authority to an algorithm that feels neither the weight of responsibility nor the burden of accountability? We need to foster a "Kill-Switch Culture," where halting an ambiguous or misbehaving process is celebrated as much as launching a new feature.
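The procurement analogy can be sketched in a few lines of code. This is a minimal illustration, assuming a hypothetical approval limit of 1 billion rupiah: actions below the limit proceed but are logged for later review (human-on-the-loop), actions above it are held until a named human approves (human-in-the-loop), and a kill switch blocks everything once engaged. The class name, limit, and return strings are assumptions, not a reference design.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical limit mirroring the 1-billion-rupiah example in the text.
APPROVAL_LIMIT_IDR = 1_000_000_000


@dataclass
class AgentGate:
    """Decides whether an agent's proposed action may proceed."""
    approval_limit: int = APPROVAL_LIMIT_IDR
    halted: bool = False
    audit_log: list = field(default_factory=list)

    def kill(self, reason: str) -> None:
        """Engage the kill switch: every later action is blocked."""
        self.halted = True
        self.audit_log.append(("KILL", reason))

    def submit(self, action: str, amount_idr: int,
               approver: Optional[str] = None) -> str:
        if self.halted:
            decision = "blocked: kill switch engaged"
        elif amount_idr > self.approval_limit and approver is None:
            decision = "held: human approval required"
        else:
            decision = "allowed"
        # Every decision is logged so humans "on the loop" can review it.
        self.audit_log.append((action, amount_idr, approver, decision))
        return decision


if __name__ == "__main__":
    gate = AgentGate()
    print(gate.submit("pay vendor invoice", 250_000_000))    # allowed
    print(gate.submit("pay vendor invoice", 1_500_000_000))  # held: human approval required
    print(gate.submit("pay vendor invoice", 1_500_000_000, approver="CFO"))  # allowed
    gate.kill("anomalous payment pattern")
    print(gate.submit("pay vendor invoice", 10_000))  # blocked: kill switch engaged
```

The gate itself is trivial; the governance work is deciding who sets the limit, who may approve, and who is empowered to call `kill` without fear of being "sungkan."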

AGI: Preparing for the Final Frontier

The conversation inevitably turns to Artificial General Intelligence (AGI). While some dismiss it as "sci-fi faffing," the rapid trajectory of Agentic AI suggests we are closer to the "Ghost in the Machine" than many are comfortable admitting. For a policymaker or a commissioner, AGI is the ultimate governance challenge because it represents a shift from "Narrow AI" (doing one thing well) to "General AI" (doing everything as well as, or better than, a human).

If we cannot govern a simple chatbot that occasionally hallucinates legal advice today, how on earth do we expect to govern a system that matches human intelligence across every domain?

The preparation for AGI doesn't start with futuristic laws; it starts with fixing your GRC basics today. It begins with Data Governance: cleaning up your data silos. It continues with a Cybersecurity posture that "assumes breach" rather than "hopes for the best." And, most importantly, it depends on a culture of Informed Scepticism. We need Directors who aren't afraid to look like the "slowest" person in the room by asking for a technical explanation of how a decision was reached.

Indonesian Context: Leading or Following?

As a nation, Indonesia has a choice. We can be merely a "testing ground" and a market for global AI companies, adopting their black-box models and hoping for the best, or we can put ourselves on the global map of Trusted AI.

Our regulators are watching. OJK and Bank Indonesia are increasingly focused on digital operations, looking not only at transformation but also at resilience. The organisations that will thrive in this new era are those that can demonstrate their AI is safe, ethical, and governed. In the global marketplace, Trust is the new currency. If you can’t prove your AI won't hallucinate a fake financial report or leak customer data, nobody will want to do business with you.

Final Thoughts: Putting Paper Tigers Away

So, as you head into your next strategic review or board meeting in one of those sleek Sudirman towers, I challenge you to look at your AI Ethics policy with fresh eyes.

Is it a living, breathing part of your GRC framework, integrated into your Cybersecurity response plan and your IT Governance protocols? Or is it just "corporate wallpaper": something that looks nice and reassuring but doesn't actually hold anything up when the wind starts to blow?

"CEO’s New Clothes" is a cautionary tale about the dangers of vanity and the fear of appearing "un-hip" or "un-tech." But in the world of high-stakes corporate leadership, that vanity can lead to a multi-million dollar fine, a destroyed reputation, and a permanent loss of customer trust.

Time to put away the paper tigers. Time for some proper, international-standard rigour. At the end of the day, when the regulators come knocking, and the algorithms start acting up, "we meant well" simply won't make the cut.

Let’s stop the faffing. Let’s get to work. Keep going and keep building secure and innovative AI.

Tags: AI Ethics, AI Governance, GRC

The Ethical Compass: Why Indonesia Must Move Beyond AI Slogans Before It’s Too Late

Imagine an algorithm deciding you are ineligible for a loan. No explanation provided. No procedure to appeal. This is the looming risk we face when Artificial Intelligence (AI) evolves without an ethical compass.

From chatbots mimicking the voices of public figures to algorithmic credit scoring and recruitment systems, AI is permeating the nuances of our daily lives at an extraordinary pace. Amidst this rapid influx, a critical question arises: where does ethics stand?

The Global Landscape vs. The Indonesian Context

In regions like the European Union, the United States, and Singapore, the discourse on AI ethics has already produced relatively established frameworks. Benchmarks such as the EU AI Act, OECD AI Principles, and the NIST AI Risk Management Framework serve as foundational pillars, emphasising fairness, transparency, accountability, and explainability.

In contrast, the conversation in Indonesia is still in its infancy. While there are emerging initiatives, such as the Financial Services Authority’s (OJK) ethical guidelines for fintech and banking, and the Ministry of Communication and Digital Affairs’ (Komdigi) circular on ethical values, the landscape remains fragmented. Without binding regulations and integrated coordination, AI ethics in Indonesia risks becoming a mere slogan rather than a functioning support system.

Why It Matters

The absence of an AI ethical framework is not just a legal vacuum; it is a lack of a moral guide to protect human interests. This gap has the potential to erode public trust, trigger economic losses, and widen social inequality. Whether it is the use of AI in public services without clear accountability or automated loan denials without fair recourse, the stakes are high.

Ethical governance for AI has become an urgent necessity. Put simply: ethics must guide AI, while the law follows closely behind.

A Collective Responsibility

AI ethics is not a purely technical matter reserved for machine learning experts, deep learning specialists, or AI engineers. It is a collective endeavour spanning sectors and generations, touching upon law, human rights, economics, education, and national values. Consequently, we cannot leave AI governance entirely to the market, private corporations, or foreign technology providers. We need an approach rooted in local wisdom, national values, and sovereignty.

A Roadmap for Action

To tackle these challenges, I propose three strategic steps:

The Path Forward

Indonesia has a significant opportunity to become a pioneer in ethics-based AI governance in Southeast Asia, provided we move beyond being passive consumers of foreign technology. The key lies in a contextual approach that respects our national principles (Pancasila), social justice, and societal diversity.

In the digital age, ethics is not an "optional extra." It is the very foundation of trust and sustainable innovation. We must build this foundation today, or we will pay a heavy price tomorrow through a crisis of confidence, social loss, and the dominance of foreign technology. It is time to place ethics at the centre of every AI policy, ensuring it remains the compass that keeps AI aligned with humanity.

Tags: AI Ethics, AI Governance, GRC

EC-Council CyberTalks

Location: Remote; Date: April 17, 2026; Organizer: EC-Council

Cloud Security Alliance Security Update Podcast

Location: Remote; Date: March 16, 2026; Organizer: Cloud Security Alliance

Book chapter: Designing Trustworthy Neuro-Symbolic AI: From Ethical Principles to Policy Implementation

Encyclopedia Entry: AI Model Governance Auditing

Encyclopedia Entry: Artificial Intelligence Auditing

Before AI Agents Start Talking: Who's Listening at Board Level?

Why Your AI Ethics Policy is Most Probably a Paper Tiger

The Ethical Compass: Why Indonesia Must Move Beyond AI Slogans Before It’s Too Late