The Claude Finance Playbook: Structured Prompts for Investment Banking, Valuation & Deal Strategy

Stelvin Saji

April 21, 2026

The financial world doesn’t reward effort; it rewards precision, clarity, and speed.

For decades, institutional-grade financial analysis lived behind elite firms, expensive advisors, and high-cost terminals. That barrier is gone.

The Claude Finance Playbook is a practitioner’s system for turning AI into a true financial engine, built for serious operators, not casual users. It delivers structured, high-performance frameworks that replicate the work of investment bankers, consultants, and top-tier analysts in minutes.

Inside are 60+ precision-built frameworks across six core domains:

1. Investment Banking & Valuation: DCF, LBOs, M&A analysis, and full-scale deal narratives.

2. Market Research & Strategy: TAM-SAM-SOM, positioning, pricing, and go-to-market execution.

3. Buffett-Style Stock Analysis: Moats, financial diagnostics, risk, and long-term value assessment.

4. Legal Document Architecture: High-quality agreements and legal frameworks without the premium fees.

5. The $2,000 Terminal Killer: Deep research, technical signals, and macro intelligence at minimal cost.

6. The Sovereign Builder: A complete system for building and scaling a one-person enterprise.

This is not about AI theory. It’s about output, leverage, and execution. The advantage compounds for those who move first.

Also included as part of the Prompt Engineer’s Bible Series.

See publication

Tags: Business Strategy, Finance, Generative AI

The Prompt Engineer's Bible

Stelvin Saji

March 12, 2026

Most people are whispering to AI. This series teaches you to command it.

The Prompt Engineer's Bible is the only collection built for those who want to operate at a level most users don't even know exists. Three volumes. Zero fluff. Pure, precision-engineered frameworks that turn AI into the most powerful tool you've ever touched.

This is the series that separates the professionals from the prompt-and-pray crowd.

Whether you're coding at speed, designing without limits, writing content that converts, or positioning yourself as the $300/hour force multiplier every team is desperately hunting for, the frameworks inside these pages don't just improve your workflow. They rebuild it from the ground up.

Silicon Valley's best-kept secret isn't talent. It's leverage. Prompt engineering, done at this level, is the purest form of leverage available to anyone with a keyboard and a vision.

The Prompt Engineer's Bible isn't a reading experience. It's an upgrade.

Three books. One mission. Make you dangerous.

See publication

Tags: AI Infrastructure, Careers, DevOps

Mastering AI Engineering: Prompt Frameworks for the Elite Developer: A Guide to Transforming AI into a $300/Hour Silicon Valley Force Multiplier

Stelvin Saji

March 03, 2026

Some developers prompt AI. And some engineers architect with it. This book was built for the second group, the ones who want to operate at 10x output, ship with confidence, and permanently separate themselves from the 95% still typing one-line prompts into a chat box.

See publication

Tags: AI Infrastructure, Generative AI, Startups

Complete Prompt Master Manual: Essential Prompts for Coding, Design, Writing & More

Stelvin Saji

December 09, 2025

Master the art of AI prompting with 500+ high-value prompts for coding, design, writing, productivity, and more.

The Complete Prompt Master Manual is the all-in-one reference built for professionals, creators, and beginners who want to get consistently powerful results from AI tools like ChatGPT, Gemini, Claude, and Llama. Instead of guessing what to type, you get a proven collection of battle-tested prompts designed to improve accuracy, speed, and creativity across all major fields.

See publication

Tags: AI Infrastructure, AI Orchestration, Open Source

Expressive Emojis: Adding Playful Expressions with HTML, CSS & JS

Stelvin Saji

July 23, 2024

See publication

Tags: Design, Design Thinking, DevOps

Face Morphing in Images: A Novel Approach Using Aligned Facial Landmarks (v1.5)

Stelvin Saji

March 31, 2026

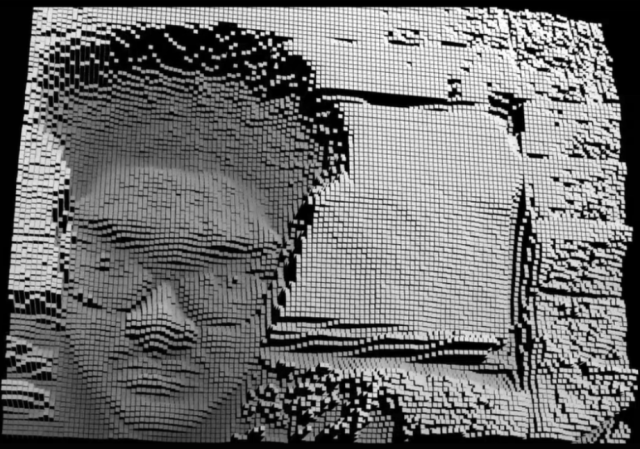

Face morphing in real-time browser environments demands more than visual fidelity; it requires stability under motion, interpretability of neural outputs, and performance that scales reliably across consumer hardware. This work presents MorphAI v1.5, a production-grade upgrade to the animated face-swapping framework introduced in v1, extending its capabilities with a significantly optimized WebGL rendering pipeline, a re-engineered multi-subject tracking architecture, and a comprehensive suite of diagnostic overlays designed to make facial computation fully observable and verifiable.

The system sustains 60 frames per second on modern mobile hardware through deliberate low-level optimizations, including reduced draw calls, efficient buffer management, and intelligent frame synchronization that eliminates jitter and temporal artifacts. Multi-subject detection is handled by a redesigned bounding box system that maintains stable alignment across dynamic scenes involving rapid movement, scale variation, and orientation shifts, ensuring that every morph operation remains spatially coherent and distortion-free.

To advance interpretability, MorphAI v1.5 introduces three diagnostic overlays: a high-density Face Mesh that maps a polygonal topology directly onto detected faces for sub-millimeter tracking precision; a 68-point Landmark system that anchors expression mapping and feature alignment to consistent anatomical reference nodes; and a Heatmap overlay that renders temporal velocity gradients across facial regions, surfacing micro-expressions and motion dynamics imperceptible to the human eye. A Split View Engine further enables side-by-side comparison between source input and neural output, transforming the pipeline from an opaque black-box system into a fully auditable visual environment.
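The Heatmap overlay described above can be illustrated with a minimal sketch. It assumes that per-region velocity is estimated from the displacement of tracked landmarks between two consecutive frames; the function names `landmark_speeds` and `normalize_for_heatmap` are hypothetical, and the actual MorphAI implementation may compute its gradients differently.

```python
import math

def landmark_speeds(prev, curr, dt):
    """Speed of each landmark (pixels per second) between two frames.

    prev, curr: lists of (x, y) landmark positions in the same order.
    dt: time between the two frames in seconds.
    """
    if len(prev) != len(curr):
        raise ValueError("landmark lists must have the same length")
    if dt <= 0:
        raise ValueError("dt must be positive")
    return [
        math.hypot(cx - px, cy - py) / dt
        for (px, py), (cx, cy) in zip(prev, curr)
    ]

def normalize_for_heatmap(speeds):
    """Scale speeds to [0, 1] so they can index a color gradient."""
    peak = max(speeds, default=0.0)
    if peak == 0.0:
        return [0.0] * len(speeds)
    return [s / peak for s in speeds]

# Two landmarks: the first moves by (3, 4) pixels, the second stays still.
speeds = landmark_speeds([(0, 0), (1, 1)], [(3, 4), (1, 1)], dt=0.5)
intensity = normalize_for_heatmap(speeds)
```

Normalizing by the per-frame peak keeps the overlay readable for both slow and fast motion, at the cost of making intensities incomparable across frames; a fixed scale would be the alternative.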

The framework additionally supports seamless export of morphed outputs beyond the live canvas and integrates a keyboard shortcut system for streamlined control in power-user workflows. MorphAI v1.5 is implemented as a browser-native system optimized for consumer-grade hardware and is publicly deployed on Hugging Face. These advancements collectively establish a robust foundation for interactive media, creative visual tooling, and research applications demanding uncompromising performance, precision, and transparency.

See publication

Tags: AI, Privacy, Startups

Investigating Human Silhouettes: A Study of Live Human Pin Art Installations

Stelvin Saji

January 27, 2026

Pin art has progressed from an early mechanical experiment to a recognized form of large-scale participatory visual expression. Its conceptual foundations originate in mid-twentieth-century research on pinscreen animation, a technique that employed dense arrays of movable pins to produce highly detailed, shadow-based imagery through direct physical manipulation. This principle was subsequently realized in an interactive, sculptural format during the 1970s through the work of artist Ward Fleming, who developed the boxed pin art object, a structured grid of displaceable metal pins capable of recording transient three-dimensional impressions of hands, faces, and everyday objects. Following its presentation in experimental exhibitions and later patenting and commercialization, pin art achieved widespread recognition as a tactile and pedagogical medium, becoming a familiar presence in offices, educational institutions, and science museums. Despite variations in scale, materials, and fabrication, its fundamental mechanism has remained consistent: the translation of physical contact into a visible spatial imprint.

More recently, pin art has experienced renewed cultural visibility through its circulation within digital and social media environments. Short-form visual demonstrations, typically characterized by the sudden emergence of a three-dimensional form from an ostensibly flat surface, correspond closely with contemporary modes of algorithmically mediated content consumption. The immediacy of this transformation, together with the perceptual illusion of depth generated through physical displacement, renders pin art particularly effective within short-form video contexts, facilitating broad dissemination and sustained audience engagement across digital platforms.

This study investigates contemporary computational strategies for simulating and extending pin art within digital environments, employing a practice-based research methodology that integrates interactive systems and visualization techniques. By situating pin art within computational frameworks, the research reconceptualizes it as an interdisciplinary practice operating at the intersection of embodied interaction, interactive art, and technological mediation. Within this framework, pin art is examined not as a static artifact, but as an adaptive system capable of supporting collective participation and experiential interpretation.

See publication

Tags: Digital Transformation, Education, Innovation

Face Morphing in Images: A Novel Approach Using Aligned Facial Landmarks

Stelvin Saji

November 25, 2025

Face swapping, the process of replacing one individual’s facial identity with another while preserving visual realism, has attracted increasing attention across digital entertainment, augmented reality, creative media, and digital forensics. This work presents an animated face-swapping system for static images that enables continuous and seamless cycling of facial identities among multiple individuals detected within a single frame.

The proposed method employs precise facial landmark detection to compute tightly aligned, rotation-aware bounding boxes that isolate only the inner facial oval, intentionally excluding hair, background regions, and other non-facial artifacts. To achieve visually coherent integration across identities, the pipeline combines color normalization, lighting transfer via homomorphic filtering, and unsharp masking, followed by seamless cloning using OpenCV. Facial transitions are animated through easing functions and temporal interpolation, producing smooth morphing effects rather than abrupt identity replacements.
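The animated transition described above (easing functions combined with temporal interpolation) can be sketched as follows. This is an illustration under stated assumptions, not the published pipeline: it uses a cubic ease-in-out curve and blends plain lists of pixel intensities, and the names `ease_in_out_cubic`, `lerp`, and `morph_frames` are hypothetical.

```python
def ease_in_out_cubic(t):
    """Map linear progress t in [0, 1] to eased progress (slow start and end)."""
    t = min(1.0, max(0.0, t))
    if t < 0.5:
        return 4.0 * t ** 3
    return 1.0 - ((-2.0 * t + 2.0) ** 3) / 2.0

def lerp(a, b, w):
    """Linear interpolation from a to b with weight w in [0, 1]."""
    return a + (b - a) * w

def morph_frames(src_pixels, dst_pixels, n_frames):
    """Yield n_frames blends from the source face to the destination face.

    src_pixels, dst_pixels: equal-length lists of pixel intensities
    (assumed already aligned and color-normalized).
    """
    for i in range(n_frames):
        t = i / (n_frames - 1) if n_frames > 1 else 1.0
        w = ease_in_out_cubic(t)
        yield [lerp(s, d, w) for s, d in zip(src_pixels, dst_pixels)]

frames = list(morph_frames([0.0], [10.0], 3))
```

Because the eased weight changes slowly near 0 and 1, the identity swap starts and settles gently instead of jumping, which is the effect the abstract contrasts with abrupt replacement.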

The system is implemented as a high-performance, React-based web component optimized for execution on consumer-grade hardware. Experimental observations demonstrate improved facial alignment, smoother temporal transitions, and enhanced perceptual realism when compared with baseline static face-swapping techniques. These characteristics make the system suitable for interactive media applications, creative visual tools, and privacy-preserving identity transformation workflows.

See publication

Tags: AI, Privacy, Startups

Olipasto

Stelvin Saji

May 25, 2026

Transform your images into refined oil pastel artwork infused with rich, expressive colors and timeless artistic depth.

See publication

Tags: Creativity, Design, Transformation

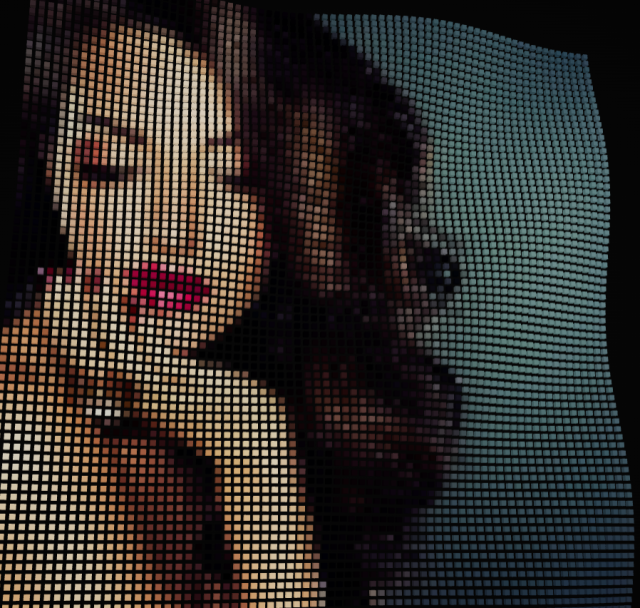

Dither Studios

Stelvin Saji

May 11, 2026

Transform any image into visually striking, high-quality dither art with a fast, precision-driven rendering engine designed for creators who value both speed and detail. Seamlessly upload your images, fine-tune dithering styles and visual depth with complete control, and export beautifully crafted pixel-based artwork in seconds. From subtle retro textures to bold monochrome compositions, every output is optimized for clarity, artistic accuracy, and a refined digital aesthetic.
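As an illustration of one possible dithering style, the sketch below applies Floyd-Steinberg error diffusion to a grayscale image stored as nested lists. The choice of algorithm, the threshold of 128, and the function name `floyd_steinberg` are assumptions made for this example; the description above does not say which algorithms Dither Studios uses.

```python
def floyd_steinberg(gray):
    """Dither a grayscale image (rows of 0-255 values) to pure black and white.

    Each pixel is thresholded, and its quantization error is pushed onto
    neighbors that have not been visited yet (right, and the row below).
    """
    h = len(gray)
    w = len(gray[0]) if h else 0
    buf = [[float(v) for v in row] for row in gray]  # working copy with error
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            old = buf[y][x]
            new = 255 if old >= 128 else 0
            out[y][x] = new
            err = old - new
            if x + 1 < w:
                buf[y][x + 1] += err * 7 / 16
            if y + 1 < h:
                if x > 0:
                    buf[y + 1][x - 1] += err * 3 / 16
                buf[y + 1][x] += err * 5 / 16
                if x + 1 < w:
                    buf[y + 1][x + 1] += err * 1 / 16
    return out

dithered = floyd_steinberg([[100, 150, 200], [50, 128, 220]])
```

Diffusing the error keeps the local average brightness close to the original, which is why mid-gray regions turn into a mix of black and white pixels rather than a flat threshold edge.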

See publication

Tags: Creativity, Design, Digital Transformation

Motion Trace: Capturing Movement as Living Memory

Stelvin Saji

April 28, 2026

Motion Trace reimagines how we experience movement, transforming fleeting actions into lasting visual narratives. Through elegant trails and soft echoes, it captures not just where something moved, but how it felt in motion. It blends technology and artistry to reveal patterns, emotion, and rhythm hidden within motion itself.

See publication

Tags: Digital Transformation, Emerging Technology, Generative AI

Bauform Generator - A Generative Bauhaus Pattern System

Stelvin Saji

April 17, 2026

A modern, algorithm-driven design tool that reimagines the timeless principles of Bauhaus through code. Blending geometry, balance, and bold color theory, it generates clean, structured patterns that feel both minimal and expressive. Designed for creatives and technologists alike, it transforms simple inputs into striking visual compositions, bridging art, logic, and interaction in a seamless, contemporary experience.

See publication

Tags: Design Thinking, Digital Transformation, Innovation

Pixeloid 3d - Transform Images into Dynamic Geometric Art

Stelvin Saji

April 09, 2026

Turn ordinary images into visually striking geometric masterpieces with Pixeloid 3D. This innovative tool reinterprets photos by breaking them down into structured shapes, such as squares, circles, rings, and other dynamic forms, creating a fusion of art and algorithm.

See publication

Tags: Design Thinking, Digital Transformation, Innovation

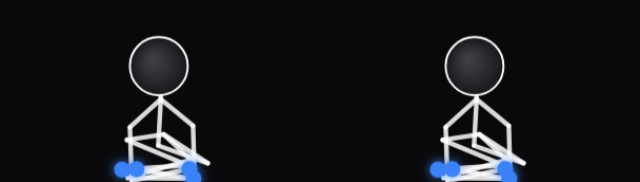

Interactive Ragdoll Physics For Stress Relief

Stelvin Saji

March 19, 2026

A minimalist, physics-driven playground designed for instant stress release. On launch, the experience introduces two dynamic ragdoll dummies that are fully responsive, weight-aware, and governed by real-time physics. Users can drag, toss, collide, or freely manipulate them, generating fluid, unpredictable motion that feels both natural and deeply satisfying. Every interaction is powered by constraint-based physics, ensuring lifelike reactions to force, momentum, and impact, whether through gentle movements or chaotic throws. The system responds seamlessly, transforming simple gestures into a calming, tactile experience. Features include two fully interactive ragdoll dummies with realistic physics behavior, smooth drag-and-throw mechanics, real-time high-performance simulation, and a clean, distraction-free interface.
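Constraint-based ragdoll physics is commonly built from point masses joined by fixed-length links. The sketch below shows one such approach, position-based Verlet integration with a distance constraint. This is an assumption about the general technique, not a description of this project's engine, and `Point`, `integrate`, and `satisfy_distance` are hypothetical names.

```python
import math
from dataclasses import dataclass

@dataclass
class Point:
    x: float
    y: float
    px: float  # previous x position (encodes velocity implicitly)
    py: float  # previous y position

def integrate(p, gravity, dt):
    """Advance one point by a Verlet step under constant downward gravity."""
    vx, vy = p.x - p.px, p.y - p.py
    p.px, p.py = p.x, p.y
    p.x += vx
    p.y += vy + gravity * dt * dt

def satisfy_distance(a, b, rest):
    """Move both points equally so they end up exactly `rest` apart."""
    dx, dy = b.x - a.x, b.y - a.y
    dist = math.hypot(dx, dy)
    if dist == 0.0:
        return  # direction undefined; skip this link for the step
    corr = (dist - rest) / dist / 2.0
    a.x += dx * corr
    a.y += dy * corr
    b.x -= dx * corr
    b.y -= dy * corr

# A "limb" stretched to length 4 is pulled back to its rest length of 2.
a = Point(0.0, 0.0, 0.0, 0.0)
b = Point(4.0, 0.0, 4.0, 0.0)
satisfy_distance(a, b, rest=2.0)
```

In a full simulation, each frame would call `integrate` on every point and then run `satisfy_distance` over all links several times, since fixing one link can slightly violate its neighbors.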

See publication

Tags: Creativity, Innovation, Mental Health

Ambient Curtain Flow

Stelvin Saji

March 05, 2026

Ambient Curtain Flow is a generative visual system where still imagery is reimagined as a living surface—soft, responsive, and in motion. Each image transforms into a fabric-like field, its edges breathing with subtle variance, its body shaped by simulated wind forces that bend and drift in real time.

See publication

Tags: Creativity, Design, Innovation

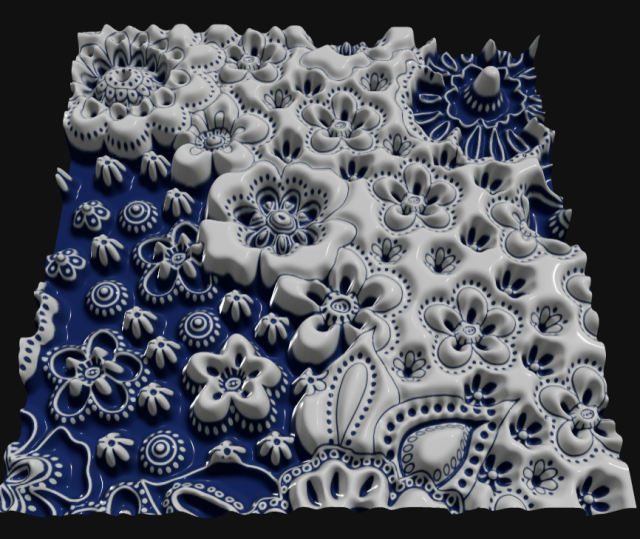

3D Embossing Effect - Embossing that enhances depth, texture, and detail

Stelvin Saji

February 25, 2026

3D Embossing Effects bring designs to life by adding striking depth, refined texture, and enhanced visual detail. This advanced finishing technique creates a tactile, multi-dimensional surface that elevates both aesthetics and perceived value. It is ideal for premium branding and high-impact visuals, delivering a sleek, sophisticated look that captures attention and reinforces a sense of quality craftsmanship.

See publication

Tags: Creativity, Design, Transformation

Jixaw

Stelvin Saji

February 09, 2026

Jixaw enables users to seamlessly upload any image and transform it into dynamic, interactive puzzle experiences. Designed with a sleek and intuitive interface, it allows users to break down visuals into fully customizable puzzle pieces, making it effortless to tailor complexity and structure to suit different needs.

See publication

Tags: Creativity, Education, Innovation

Investigating Human Silhouettes: A Study of Live Human Pin Art Installations

Stelvin Saji

January 27, 2026

Pin art, originally developed through mid-20th-century pinscreen animation and later popularized as an interactive sculptural medium, translates physical contact into dynamic three-dimensional impressions. This project reimagines pin art in a digital environment, using real-time camera input to transform human faces into interactive pin-based visualizations. By combining principles of embodied interaction, creative coding, and computational visualization, it extends traditional pin art beyond its mechanical limitations.

The result is an immersive, participatory experience in which physical presence is instantly converted into a responsive visual form, bridging tactile art and contemporary digital media. This work positions pin art as a modern interactive medium, aligning with evolving trends in short-form visual content and real-time user engagement.

See publication

Tags: AR/VR, Design Thinking, Innovation

ASCII Art Generator

Stelvin Saji

January 14, 2026

Transform visuals into striking text-based artistry with refined precision. ASCII Art Studio reimagines your images as elegantly structured compositions, preserving depth, contrast, and detail through intelligently mapped characters. It delivers clean, scalable ASCII renderings suitable for digital art, branding, and creative experimentation.
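The idea of "intelligently mapped characters" can be illustrated with the simplest version of the technique: mapping each pixel's brightness to a character from a density ramp. The ramp string and the function name `to_ascii` are assumptions for this sketch, which targets a dark background (bright pixels get dense glyphs); the tool itself may use a different ramp or a more elaborate mapping.

```python
# Characters ordered from visually sparse to visually dense (assumed ramp).
RAMP = " .:-=+*#%@"

def to_ascii(gray_rows, ramp=RAMP):
    """Convert rows of 0-255 grayscale values into lines of ASCII characters."""
    last = len(ramp) - 1
    lines = []
    for row in gray_rows:
        chars = []
        for v in row:
            # Scale brightness to a ramp index, rounding to the nearest step.
            idx = int(v / 255 * last + 0.5)
            chars.append(ramp[min(last, max(0, idx))])
        lines.append("".join(chars))
    return "\n".join(lines)

art = to_ascii([[0, 255], [128, 0]])
```

In practice the source image would first be downsampled, and the horizontal resolution is usually doubled or the vertical halved, because terminal characters are taller than they are wide.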

See publication

Tags: Creativity, Digital Transformation, Emerging Technology


MorphAI (AI Face Morphing Platform)


The Hidden Potential of Face Morphing - Slides


The Hidden Potential of Face Morphing
